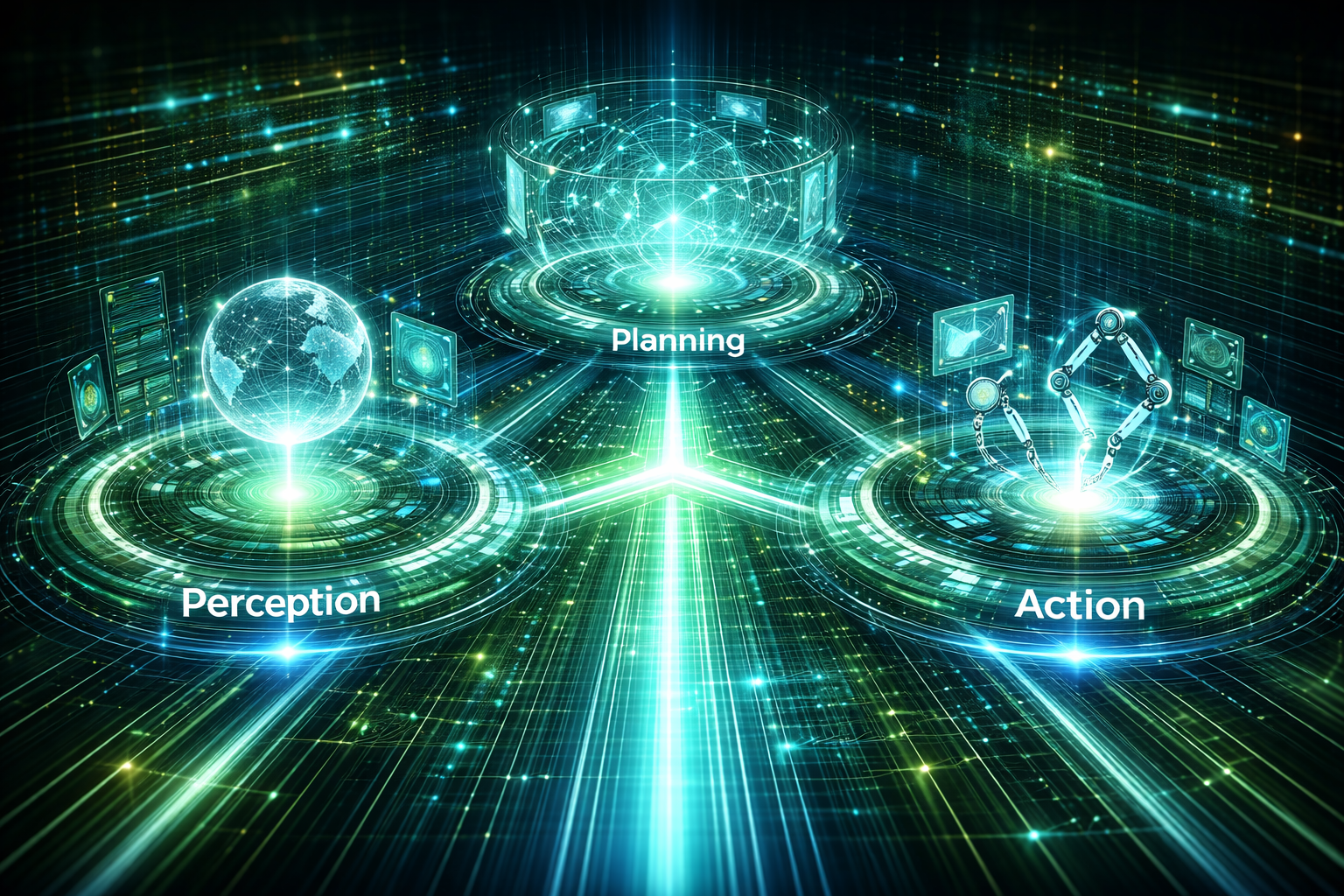

Core Agent Capabilities - Perception, Planning, and Action

Agentic AI systems are often described in terms of autonomy, speed, or intelligence. In practice, none of these qualities matter unless the agent can reliably perceive its environment, plan coherent courses of action, and execute those actions within defined boundaries. These three capabilities — Perception, Planning, and Action — form the irreducible core of every agentic system, regardless of domain or sophistication.

For leaders and architects, understanding these capabilities is not an academic exercise. Each capability introduces distinct architectural choices, operational risks, and governance responsibilities. Weakness in any one of them destabilizes the entire system.

Perception: Constructing a Trustworthy View of Reality

Perception is the agent’s ability to observe, interpret, and contextualize signals from its environment. This includes structured data, unstructured inputs, system events, user interactions, and external signals such as APIs or sensors.

In human organizations, perception is filtered through experience and judgment. In agentic systems, perception is mechanized and therefore brittle if poorly designed.

Practical Considerations

Signal Selection Matters More Than Volume

Agents do not need “more data”; they need relevant, timely, and reliable signals. Excessive inputs increase noise and ambiguity rather than insight.

Contextualization Over Raw Ingestion

Raw events must be enriched with business context: ownership, priority, historical patterns, and policy relevance. Without context, perception degenerates into pattern matching.

Trust and Provenance

Not all signals are equally trustworthy. Mature systems assign confidence levels, track data lineage, and distinguish between authoritative and advisory inputs.
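As a minimal sketch of what confidence levels, lineage, and the authoritative/advisory distinction could look like in code: the `Signal` and `Authority` types, the field names, the sample sources, and the 0.8 threshold below are all hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Authority(Enum):
    AUTHORITATIVE = "authoritative"  # e.g. a system of record
    ADVISORY = "advisory"            # a hint, never sufficient on its own


@dataclass(frozen=True)
class Signal:
    name: str
    value: object
    source: str         # where the signal came from (data lineage)
    confidence: float   # 0.0 to 1.0, assumed to be set by the ingestion layer
    authority: Authority


def trusted_signals(signals, min_confidence=0.8):
    """Keep only authoritative signals at or above the confidence threshold."""
    return [
        s for s in signals
        if s.authority is Authority.AUTHORITATIVE and s.confidence >= min_confidence
    ]


signals = [
    Signal("disk_usage_pct", 93, "monitoring-agent", 0.95, Authority.AUTHORITATIVE),
    Signal("disk_usage_pct", 60, "user-report", 0.40, Authority.ADVISORY),
    Signal("owner_team", "storage", "cmdb", 0.70, Authority.AUTHORITATIVE),
]
usable = trusted_signals(signals)
print([s.source for s in usable])  # ['monitoring-agent']
```

Advisory or low-confidence signals are not discarded from the system in this sketch; they are simply excluded from the set the agent is allowed to act on.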

Many agent failures begin at the perception layer. If the agent sees the world incorrectly, every downstream decision can be logically sound and still strategically wrong. Leaders should treat perception as a governance concern, not merely a data engineering task.

Planning: Translating Intent into Coherent Decisions

Planning is the capability that transforms perception into structured intent: goals, options, trade-offs, and sequences of actions. This is where alignment is either preserved or lost.

Planning is not just “deciding what to do next.” It is deciding why something should be done, under what constraints, and with what acceptable risk.

Practical Considerations

Explicit Goals and Constraints

Agents require clearly defined objectives and non-negotiable boundaries. Ambiguous goals lead to unintended optimization.

Short-Term Actions vs. Long-Term Outcomes

Effective planning balances immediate efficiency with downstream consequences. Without this, agents optimize locally and fail globally.

Fallbacks and Escalation Paths

Planning must include conditions under which the agent defers, pauses, or escalates to humans. Autonomy without exit ramps is operational negligence.
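The considerations above can be sketched as a single planning function that enforces a hard risk boundary and escalates when it cannot decide safely. The `Option` type, the benefit and risk scores, and the thresholds are hypothetical; a real planner would derive them from policy rather than hard-code them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Option:
    name: str
    expected_benefit: float  # hypothetical score, higher is better
    risk: float              # hypothetical score from 0.0 to 1.0


def plan(options, max_risk, min_confidence, perception_confidence):
    """Return ("act", option) or ("escalate", reason)."""
    # Exit ramp 1: the agent does not trust its own view of the world.
    if perception_confidence < min_confidence:
        return ("escalate", "perception confidence below threshold")
    # Non-negotiable boundary: options above the risk tolerance are never considered.
    allowed = [o for o in options if o.risk <= max_risk]
    # Exit ramp 2: nothing acceptable remains, so a human decides.
    if not allowed:
        return ("escalate", "no option within risk tolerance")
    return ("act", max(allowed, key=lambda o: o.expected_benefit))


options = [
    Option("restart_service", expected_benefit=0.6, risk=0.2),
    Option("failover_region", expected_benefit=0.9, risk=0.7),
]
decision = plan(options, max_risk=0.3, min_confidence=0.8, perception_confidence=0.9)
print(decision[0], decision[1].name)  # act restart_service
```

Note that the higher-benefit option loses here: the constraint is applied before optimization, not traded off against it.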

Planning is where leadership intent must be encoded most precisely. If leaders cannot articulate priorities, trade-offs, and risk tolerance in machine-interpretable terms, the agent will invent its own logic—and defend it with perfect consistency.

Action: Executing Safely in the Real World

Action is where agentic systems stop being theoretical and start having consequences. Actions may include API calls, system changes, communications, financial transactions, or triggering workflows across the enterprise.

Execution is deceptively simple and disproportionately dangerous.

Practical Considerations

Authority Separation

The ability to recommend an action must be separated from the authority to execute it. Not all actions should be autonomous, even if they are well-planned.
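One way to picture this separation, as a sketch only: the agent produces a `Recommendation`, and a separate check decides whether it may run. The allowlist contents, the `approved_by` field, and the example action names are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical allowlist of actions the agent may execute without a human.
AUTONOMOUS_ACTIONS = {"restart_service", "clear_cache"}


@dataclass(frozen=True)
class Recommendation:
    action: str
    target: str
    approved_by: Optional[str] = None  # assumed to be set by a human approver


def may_execute(rec: Recommendation) -> bool:
    """Allowlisted actions run autonomously; everything else needs approval."""
    return rec.action in AUTONOMOUS_ACTIONS or rec.approved_by is not None


recs = [
    Recommendation("restart_service", "web-1"),
    Recommendation("delete_database", "orders"),
    Recommendation("delete_database", "orders", approved_by="dba-on-call"),
]
print([may_execute(r) for r in recs])  # [True, False, True]
```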

Rate Limiting and Scope Control

Agents should act within predefined limits: frequency, magnitude, and blast radius. This prevents cascading failures at machine speed.
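A minimal sketch of such limits, assuming a sliding time window for frequency and a cap on targets per action as a stand-in for blast radius. The `ActionGuard` class and its parameters are hypothetical; the clock is injectable only so the example can be run deterministically.

```python
import time
from collections import deque


class ActionGuard:
    """Caps frequency (actions per window) and scope (targets per action)."""

    def __init__(self, max_actions, window_seconds, max_targets, clock=time.monotonic):
        self.max_actions = max_actions
        self.window_seconds = window_seconds
        self.max_targets = max_targets
        self.clock = clock
        self._timestamps = deque()

    def allow(self, targets):
        now = self.clock()
        # Forget actions that have aged out of the sliding window.
        while self._timestamps and now - self._timestamps[0] >= self.window_seconds:
            self._timestamps.popleft()
        if len(targets) > self.max_targets:
            return False  # scope too wide
        if len(self._timestamps) >= self.max_actions:
            return False  # too frequent
        self._timestamps.append(now)
        return True


fake_now = [0.0]  # mutable holder so the example controls time
guard = ActionGuard(max_actions=2, window_seconds=60, max_targets=3,
                    clock=lambda: fake_now[0])
results = [
    guard.allow(["host-1"]),
    guard.allow(["host-2", "host-3"]),
    guard.allow(["host-4"]),                    # third action inside the window
    guard.allow(["h1", "h2", "h3", "h4"]),      # too many targets at once
]
fake_now[0] = 61.0
results.append(guard.allow(["host-4"]))         # window has moved on
print(results)  # [True, True, False, False, True]
```

Rejected actions are not queued or retried in this sketch; whether to drop, defer, or escalate them is a separate design decision.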

Observability and Reversibility

Every action must be traceable, auditable, and—where possible—reversible. If you cannot explain or undo an action, the system is not production-ready.
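Traceability and reversibility can be sketched by requiring every action to arrive with its own undo step and by logging both directions. The `Executor` class and the in-memory `config` dictionary are hypothetical stand-ins; a production system would write to durable, tamper-evident storage.

```python
class Executor:
    """Runs actions only if they come with an undo, and records both."""

    def __init__(self):
        self.audit_log = []
        self._done = []  # stack of (description, undo) for completed actions

    def run(self, description, do, undo):
        do()
        self._done.append((description, undo))
        self.audit_log.append(f"DO {description}")

    def rollback(self):
        # Undo in reverse order so later changes are reverted first.
        while self._done:
            description, undo = self._done.pop()
            undo()
            self.audit_log.append(f"UNDO {description}")


config = {"replicas": 2}  # stand-in for real system state
executor = Executor()
executor.run(
    "scale replicas 2 -> 4",
    do=lambda: config.update(replicas=4),
    undo=lambda: config.update(replicas=2),
)
after_run = dict(config)
executor.rollback()
print(after_run, config, executor.audit_log)
```

Actions that cannot supply an undo step, such as sending an email, would need a different path, typically stricter approval before execution.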

Execution reveals the true cost of misalignment. Leaders often discover governance gaps only after agents act correctly according to their design—but incorrectly according to business reality. Action layers should therefore be designed conservatively, with intentional friction.

The Capability Chain Is Only as Strong as Its Weakest Link

Perception, Planning, and Action are not independent modules. They form a continuous decision loop:

- Perception defines what the agent believes is happening.

- Planning defines what the agent believes should be done.

- Action defines what actually changes the world.

Over-investing in one capability while neglecting the others creates fragile systems. Advanced planning models cannot compensate for poor perception. Careful execution controls cannot fix misaligned planning.
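A toy sketch of the loop makes the dependency concrete. The function names, the CPU threshold, and the replica counter are invented for illustration; the point is only that planning and action behave exactly as designed while a wrong reading still produces the wrong outcome.

```python
def perceive(reading):
    # Belief about the world; a stale or wrong reading passes through unchanged.
    return {"cpu_pct": reading["cpu_pct"]}


def plan(belief, scale_up_above=80):
    # What the agent believes should be done, given its belief.
    return "scale_up" if belief["cpu_pct"] > scale_up_above else "no_op"


def act(decision, state):
    # What actually changes in the world.
    new_state = dict(state)
    if decision == "scale_up":
        new_state["replicas"] += 1
    return new_state


def run_once(reading, state):
    return act(plan(perceive(reading)), state)


state = {"replicas": 2}
accurate = run_once({"cpu_pct": 95}, state)  # sensor reports the true load
stale = run_once({"cpu_pct": 40}, state)     # sensor is stale; true load is still 95
print(accurate, stale)  # {'replicas': 3} {'replicas': 2}
```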

Final Thought for Leaders

Agentic AI does not fail because it lacks intelligence. It fails because it lacks disciplined design around these core capabilities.

The organizations that succeed with Agentic AI will not be those with the most advanced models, but those that treat perception as truth management, planning as intent governance, and action as a controlled privilege—not an entitlement.

Autonomy is not a feature. It is an operational responsibility, distributed across these three capabilities.

Disclaimer: This post provides general information and is not tailored to any specific individual or entity. It includes only publicly available information for general awareness purposes. The author does not warrant that this post is free from errors or omissions. Views are personal.