The Three Building Blocks of an AI Agent - Models, Tools, and Orchestration

Image Source: Google Agents Whitepaper

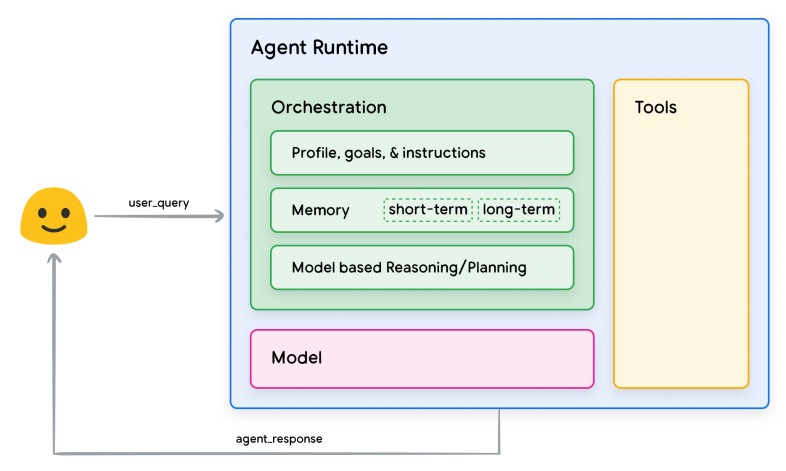

AI agents are often described in abstract terms — “autonomous,” “intelligent,” or “goal-driven.” In practice, however, an AI agent is not a monolithic entity. It is a composed system, built from three foundational components:

- The Model – the reasoning and language engine

- The Tools – the agent’s means of acting on the world

- The Orchestration Layer – the control system that governs behavior over time

Understanding these components and their boundaries is essential for designing reliable, scalable, and governable agentic systems.

The Model: The Reasoning and Language Engine

At the core of every AI agent sits a foundation model (typically a large language model, or LLM). Its responsibilities are precise and limited:

- Interpret inputs (user prompts, system messages, state summaries)

- Reason about goals, constraints, and next steps

- Generate structured outputs (plans, decisions, tool calls, responses)

What the model does well

- Pattern recognition over language and symbols

- Multi-step reasoning when properly prompted

- Decision-making under uncertainty, given context

What the model does not do

- It does not execute actions

- It does not maintain long-term state reliably on its own

- It does not enforce business rules, safety policies, or permissions

This distinction is critical. Treating the model as the “brain” is acceptable, but treating it as the system is a design error. Models are probabilistic predictors, not deterministic controllers.

In production-grade agents, models are replaceable components — they can be upgraded, swapped, or specialized (e.g., planner models vs. summarization models) without rewriting the entire system.
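
One way to keep models replaceable is to have the rest of the system depend on a small interface rather than on a specific model. The sketch below assumes a hypothetical `Model` protocol with a single `complete` method; `PlannerModel` and `SummarizerModel` are illustrative stand-ins for real LLM calls, not any vendor's API.

```python
from typing import Protocol


class Model(Protocol):
    """Hypothetical interface: anything that maps a prompt to text."""

    def complete(self, prompt: str) -> str: ...


class PlannerModel:
    # Stand-in for a call to a planning-oriented LLM.
    def complete(self, prompt: str) -> str:
        return f"PLAN: {prompt}"


class SummarizerModel:
    # Stand-in for a call to a cheaper summarization model.
    def complete(self, prompt: str) -> str:
        return f"SUMMARY: {prompt[:20]}"


def run_step(model: Model, prompt: str) -> str:
    # The caller depends only on the interface, so models can be swapped freely.
    return model.complete(prompt)


planner_output = run_step(PlannerModel(), "refund order A1")
summary_output = run_step(SummarizerModel(), "refund order A1")
```

Because `run_step` never names a concrete class, upgrading or specializing a model is a one-line change at the call site.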

Tools: Controlled Interaction With the World

If the model is the reasoning engine, tools are the hands.

A tool is any external capability the agent can invoke, such as:

- APIs (databases, CRMs, ticketing systems)

- Code execution environments

- Search and retrieval systems

- Internal microservices

- Workflow engines

Tools convert intent into impact.

Why tools matter

Without tools, a model can only talk. With tools, an agent can:

- Fetch real-time data

- Create or modify records

- Trigger workflows

- Interact with other systems

The design principle: tools are explicit

Well-designed agents never allow the model to “improvise” actions. Instead:

- Tools have defined schemas

- Inputs and outputs are structured

- Permissions and scopes are enforced outside the model

For example, an agent may decide to issue a refund, but the refund logic, limits, approvals, and audit logging live in the tool, not in the model’s reasoning.

This separation ensures:

- Safety

- Auditability

- Predictable behavior
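
The refund example can be sketched as a tool that owns its own schema, limits, and audit trail. Everything here is an assumption made for illustration: the `RefundRequest` fields, the `refunds:write` scope name, and the 5,000-cent auto-approval limit are hypothetical, and a real tool would call a payments system instead of returning a status.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class RefundRequest:
    # Structured input: the model must supply exactly these fields.
    order_id: str
    amount_cents: int


@dataclass
class RefundTool:
    max_auto_refund_cents: int = 5_000  # hypothetical business limit
    audit_log: list[dict] = field(default_factory=list)

    def __call__(self, request: RefundRequest, caller_scopes: set[str]) -> dict:
        # Permissions and limits are checked here, outside the model.
        if "refunds:write" not in caller_scopes:
            result = {"status": "denied", "reason": "missing scope"}
        elif request.amount_cents <= 0:
            result = {"status": "rejected", "reason": "invalid amount"}
        elif request.amount_cents > self.max_auto_refund_cents:
            result = {"status": "needs_approval", "reason": "over limit"}
        else:
            result = {"status": "refunded", "reason": "within limit"}
        # Every attempt is logged, whether or not it succeeded.
        self.audit_log.append(
            {"order_id": request.order_id, "amount_cents": request.amount_cents, **result}
        )
        return result


refund_tool = RefundTool()
outcome = refund_tool(RefundRequest("A1", 1_200), {"refunds:write"})
```

The model only proposes a `RefundRequest`; whether money actually moves is decided by deterministic code that can be reviewed and tested on its own.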

Orchestration: The Most Underrated Component

The orchestration layer is what turns a model-with-tools into an agent.

Orchestration governs:

- When the model is called

- Which model is used for which task

- How state is stored and updated

- How decisions evolve across steps

- How errors, retries, and escalations are handled

In practical terms, orchestration is responsible for control flow.

Key orchestration responsibilities

a. State management

Agents operate over time. Orchestration maintains:

- Conversation state

- Task progress

- Intermediate decisions

- Memory summaries

This state must be external to the model to be reliable.
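
A minimal sketch of externalized state, assuming a hypothetical `AgentState` record and an in-memory store standing in for a real database or key-value service. The field names simply mirror the list above.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class AgentState:
    conversation: list[str] = field(default_factory=list)
    task_progress: str = "not_started"
    decisions: list[str] = field(default_factory=list)
    memory_summary: str = ""


class InMemoryStateStore:
    """Stand-in for a database; state is serialized, not held in the model."""

    def __init__(self) -> None:
        self._rows: dict[str, str] = {}

    def save(self, session_id: str, state: AgentState) -> None:
        self._rows[session_id] = json.dumps(asdict(state))

    def load(self, session_id: str) -> AgentState:
        raw = self._rows.get(session_id)
        # Unknown sessions start from a fresh, empty state.
        return AgentState(**json.loads(raw)) if raw else AgentState()


store = InMemoryStateStore()
state = store.load("session-1")
state.conversation.append("user: where is my order?")
state.task_progress = "in_progress"
store.save("session-1", state)
```

Each model call can then be given a fresh summary built from the stored record, so nothing depends on the model "remembering" earlier turns.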

b. Planning and execution loops

Most agents follow a loop:

- Observe state

- Reason (model)

- Act (tool)

- Observe result

- Decide next step

Orchestration enforces this loop and prevents runaway behavior.
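
The loop above can be sketched in a few lines. This is an illustrative outline under stated assumptions, not a production implementation: the decision format (a dict with `action` and optional `args`), the `scripted_model` stand-in, and the `lookup_order` tool are all hypothetical. The hard step limit is what prevents runaway behavior.

```python
from typing import Callable

# Hypothetical shapes: a decision is a dict with "action" and optional "args".
Decision = dict
ModelFn = Callable[[list[str]], Decision]
ToolFn = Callable[[dict], str]


def run_agent(
    model: ModelFn, tools: dict[str, ToolFn], goal: str, max_steps: int = 5
) -> list[str]:
    observations = [f"goal: {goal}"]                 # observe state
    for _ in range(max_steps):                       # hard cap on iterations
        decision = model(observations)               # reason (model)
        if decision["action"] == "finish":
            observations.append("finished")
            return observations
        tool = tools.get(decision["action"])
        if tool is None:
            # Unknown tools are reported back instead of being improvised.
            observations.append(f"error: unknown tool {decision['action']}")
            continue
        observations.append(tool(decision.get("args", {})))  # act, observe result
    observations.append("stopped: step limit reached")
    return observations


def scripted_model(observations: list[str]) -> Decision:
    # Toy stand-in for an LLM: look the order up once, then finish.
    if any(o.startswith("order") for o in observations):
        return {"action": "finish"}
    return {"action": "lookup_order", "args": {"order_id": "A1"}}


tools = {"lookup_order": lambda args: f"order {args['order_id']}: shipped"}
trace = run_agent(scripted_model, tools, "check order A1")
```

A model that never returns `finish` still terminates after `max_steps` iterations, because the exit condition belongs to the orchestrator rather than to the model.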

c. Policy and guardrails

Business rules, safety constraints, rate limits, and human approval points live in the orchestration layer, where they are enforced in code, not in prompts alone.
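
As a rough sketch, a guardrail can be a plain function the orchestrator calls before any tool runs. The `Policy` class, its verdict strings, and the action names below are hypothetical, chosen only to show an allow-list, a rate limit, and a human approval point enforced in code.

```python
from dataclasses import dataclass


@dataclass
class Policy:
    allowed_actions: frozenset[str]
    needs_human_approval: frozenset[str]
    max_calls_per_session: int
    calls_made: int = 0

    def check(self, action: str) -> str:
        """Return a verdict for a proposed action before any tool is invoked."""
        if action not in self.allowed_actions:
            return "block"
        if self.calls_made >= self.max_calls_per_session:
            return "rate_limited"
        if action in self.needs_human_approval:
            return "escalate"  # hand off to a person; nothing is executed
        self.calls_made += 1
        return "allow"


policy = Policy(
    allowed_actions=frozenset({"lookup_order", "issue_refund"}),
    needs_human_approval=frozenset({"issue_refund"}),
    max_calls_per_session=2,
)
verdict = policy.check("issue_refund")
```

No prompt wording can change the verdict: the model may ask for any action, but only actions that pass `check` reach a tool.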

d. Multi-agent coordination

In advanced systems, orchestration manages:

- Role separation (planner, executor, reviewer)

- Message passing between agents

- Conflict resolution
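
A toy illustration of role separation and message passing, assuming three hypothetical agents written as plain functions. Real agents would wrap model calls; the point of the sketch is only that the orchestrator, not the agents, decides who speaks next and that the reviewer's verdict settles the outcome.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Message:
    sender: str
    content: str


Agent = Callable[[Message], Message]


def planner(msg: Message) -> Message:
    return Message("planner", f"plan for: {msg.content}")


def executor(msg: Message) -> Message:
    return Message("executor", f"executed {msg.content}")


def reviewer(msg: Message) -> Message:
    # The reviewer only approves work that actually went through the executor.
    verdict = "approved" if msg.content.startswith("executed") else "rejected"
    return Message("reviewer", verdict)


def coordinate(task: str, pipeline: list[Agent]) -> list[Message]:
    # Each agent sees only the previous message; routing lives in this function.
    transcript = [Message("user", task)]
    for agent in pipeline:
        transcript.append(agent(transcript[-1]))
    return transcript


transcript = coordinate("refund order A1", [planner, executor, reviewer])
```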

Without orchestration, autonomy becomes chaos.

Why These Components Must Be Separated

Separation of model, tools, and orchestration enables:

- Replaceability (swap models without rewriting tools)

- Governance (audit actions independently of reasoning)

- Reliability (deterministic control over execution)

- Scalability (parallel agents with shared infrastructure)

This mirrors established software architecture principles:

- Models resemble decision services

- Tools resemble bounded capabilities

- Orchestration resembles workflow engines

Agentic AI is not a departure from systems engineering—it is an extension of it.

A Practical Mental Model

If you are designing or evaluating an AI agent, ask three concrete questions:

- Model: Which decisions is the model responsible for, and which decisions is it not allowed to make?

- Tools: Which real-world actions are exposed, and how are they constrained?

- Orchestration: Who controls the sequence, state, and authority of actions over time?

If any one of these answers is vague, the system is fragile.

The future of agentic AI will be determined not by larger models alone, but by how rigorously we design the boundaries between reasoning, action, and control.

Disclaimer: This post provides general information and is not tailored to any specific individual or entity. It includes only publicly available information for general awareness purposes. The author does not warrant that this post is free from errors or omissions. Views are personal.