Mapping Agentic AI to Product Strategy - Part 3 - The Architecture of Agentic Products

This is the third article in the series on Mapping Agentic AI to Product Strategy. You can find the previous installment at the link below:

1. Why Architecture Determines Strategy Execution

Strategy without architecture is aspiration.

Architecture without strategy is noise.

In an agentic world, the two cannot be separated.

A goal is only meaningful if the system can act on it.

An outcome is only achievable if the structure supports adaptation.

Many organizations misunderstand this point.

They integrate a large language model.

They expose a chat interface.

They declare themselves AI-powered.

But autonomy does not emerge from a single model.

It emerges from layered design.

Every architectural shift in computing reshaped organizations.

Mainframes centralized power.

Client-server distributed access.

Cloud decentralized infrastructure.

Agentic architecture redistributes decision authority.

Not just how systems compute.

But how they decide.

How they adapt.

How they align with strategy.

If Part 2 redefined strategy as a control system, Part 3 defines the structure that makes that control real.

Autonomy requires layers.

Integration across data.

Decision engines.

Execution tools.

Feedback loops.

Governance mechanisms.

Remove one layer and autonomy weakens.

Consider how Microsoft integrates Copilot across enterprise products.

It is not a single chatbot.

It connects to email.

Documents.

Calendars.

Security systems.

The intelligence sits on top of structured architecture.

Or consider OpenAI building agent frameworks.

The model alone is insufficient.

Agents require memory.

Tool access.

Execution logic.

Architecture determines whether strategy becomes operational reality.

Part 3 introduces a structured blueprint.

A model you can use.

Audit.

Scale.

A disciplined architecture for agentic products.

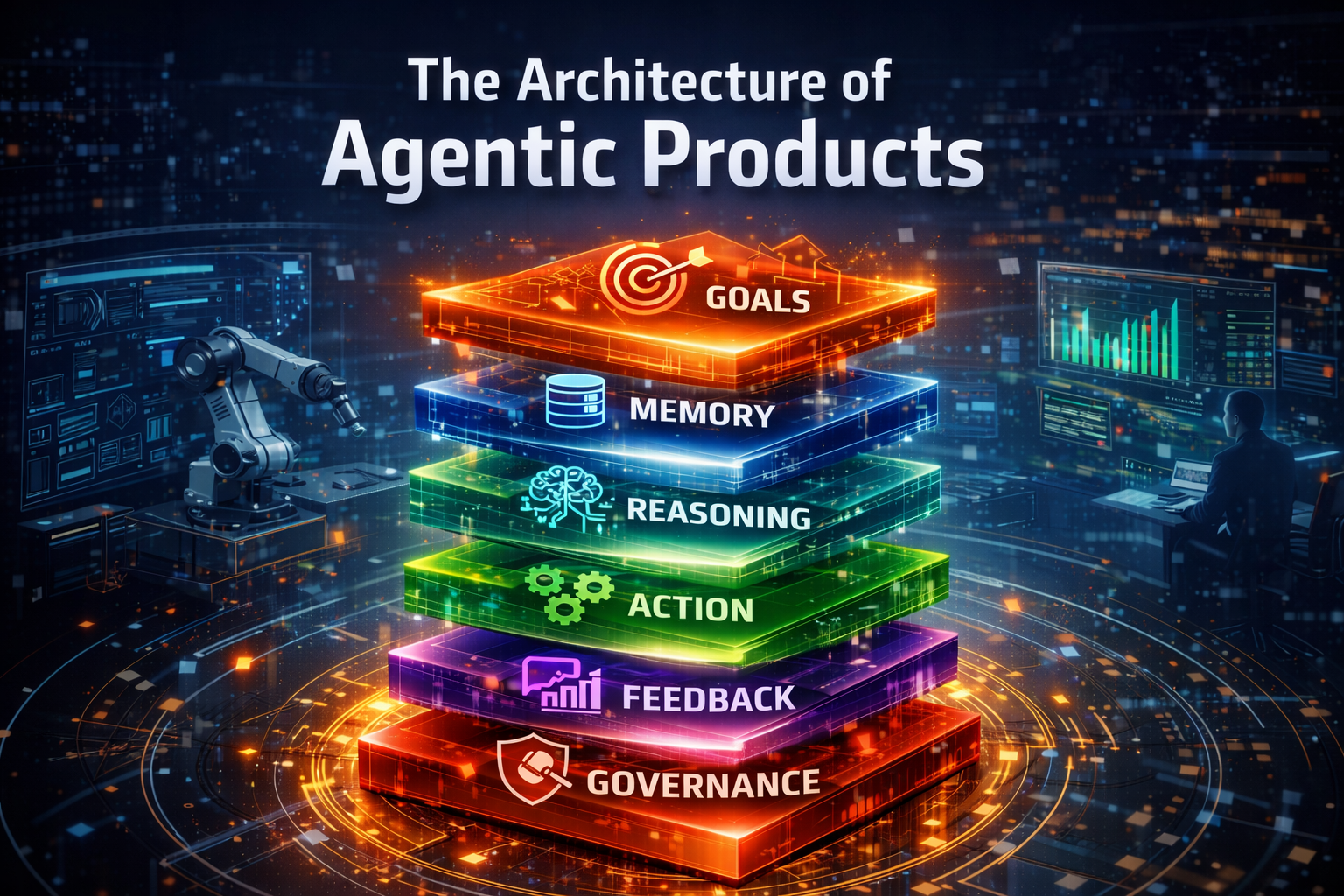

2. The Six-Layer Agentic Stack

Agentic products are layered systems.

Autonomy emerges from integration.

To structure this clearly, we define the Agentic Stack.

It has six logical layers:

- Goal Layer

- Memory Layer

- Reasoning Layer

- Action Layer

- Feedback Layer

- Governance Layer

Each layer has a function.

Each layer interacts with others.

Each layer must align with enterprise strategy.

If one layer is weak, the system degrades.

Let us examine each layer at a high level.

The Goal Layer defines intent.

It translates business objectives into machine-optimizable signals.

The Memory Layer provides context.

It stores user behavior.

System history.

Market information.

The Reasoning Layer plans decisions.

It evaluates options.

It simulates outcomes.

The Action Layer executes decisions.

It calls APIs.

It triggers workflows.

It modifies interfaces.

The Feedback Layer measures outcomes.

It captures signals.

It updates learning models.

The Governance Layer enforces boundaries.

It ensures compliance.

It protects brand integrity.

These layers are not sequential.

They form a loop.

Goals inform reasoning.

Reasoning uses memory.

Actions produce outcomes.

Feedback updates memory.

Governance monitors everything.

This is not theoretical.

It is practical.

Without this stack, autonomy remains superficial.
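
The loop can be sketched in a few lines. This is a toy illustration, not a reference implementation: the class name `AgentLoop`, the numeric goal, and the `max_step` boundary are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class AgentLoop:
    goal_target: float                           # Goal Layer: desired metric value
    max_step: float                              # Governance Layer: largest change allowed per cycle
    memory: list = field(default_factory=list)   # Memory Layer: history of observed outcomes

    def reason(self, current: float) -> float:
        # Reasoning Layer: propose a change that closes the gap to the goal
        return self.goal_target - current

    def govern(self, proposed: float) -> float:
        # Governance Layer: clamp the proposal to the permitted boundary
        return max(-self.max_step, min(self.max_step, proposed))

    def run_cycle(self, current: float) -> float:
        step = self.govern(self.reason(current))
        outcome = current + step       # Action Layer: apply the change
        self.memory.append(outcome)    # Feedback Layer: record the outcome in memory
        return outcome


loop = AgentLoop(goal_target=10.0, max_step=2.0)
value = 3.0
for _ in range(5):
    value = loop.run_cycle(value)
```

Each cycle moves the metric toward the goal, but never by more than governance allows. Every outcome lands in memory for the next cycle.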

3. Goal Layer: Strategic Intent Encoding

The Goal Layer is the foundation.

Without clear goals, autonomy drifts.

Systems optimize what they measure.

If you measure poorly, they optimize poorly.

Translating Business KPIs into Machine Goals

Business leaders speak in outcomes.

Revenue growth.

Margin expansion.

Customer lifetime value.

Machines require signals.

Increase conversion rate by 5 percent.

Reduce churn probability by 3 percent.

Translation is critical.

Enterprise objective becomes product objective.

Product objective becomes optimization metric.

This is strategic encoding.

A vague ambition cannot guide an agentic system.

Clarity drives precision.

Hierarchical Goal Cascading

Goals must cascade.

Enterprise strategy defines direction.

Product strategy defines focus.

Operational metrics define daily optimization.

For example:

Enterprise goal: Increase profitability.

Product goal: Increase repeat purchases.

Micro goal: Improve recommendation relevance.

Cascading ensures coherence.

Local optimization must serve global outcomes.

If a recommendation system increases clicks but reduces trust, enterprise goals suffer.

Hierarchy prevents fragmentation.
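
One way to make the cascade explicit is a small goal tree in which every micro goal can be traced back to the enterprise goal it serves. The sketch below uses the example goals above. The `Goal` class and the metric names (`operating_margin`, `repeat_purchase_rate`, `recommendation_ctr`) are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Goal:
    name: str
    metric: str  # hypothetical metric identifier used for optimization
    parent: Optional["Goal"] = field(default=None, repr=False, compare=False)
    children: list = field(default_factory=list, repr=False, compare=False)

    def add_child(self, name: str, metric: str) -> "Goal":
        child = Goal(name, metric, parent=self)
        self.children.append(child)
        return child

    def lineage(self) -> list:
        # Walk upward so any local goal can be traced to the enterprise goal
        node, path = self, []
        while node is not None:
            path.append(node.name)
            node = node.parent
        return list(reversed(path))


enterprise = Goal("Increase profitability", "operating_margin")
product = enterprise.add_child("Increase repeat purchases", "repeat_purchase_rate")
micro = product.add_child("Improve recommendation relevance", "recommendation_ctr")
print(" -> ".join(micro.lineage()))
```

A micro goal with no lineage to an enterprise goal is a candidate for removal.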

Multi-Objective Optimization

Single metrics distort behavior.

If you optimize only engagement, quality may fall.

If you optimize only revenue, user experience may degrade.

Agentic systems must balance objectives.

Revenue.

Retention.

Brand integrity.

Risk exposure.

Balancing objectives requires weighted scoring.

It requires explicit trade-offs.

The Goal Layer defines those trade-offs.
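
A minimal sketch of weighted scoring follows. The weights, the option names, and the normalized 0 to 1 scores are illustrative assumptions. In practice they would come from strategy discussions and measured data.

```python
# Assumed weights: positive for value created, negative for risk taken on
WEIGHTS = {
    "revenue": 0.4,
    "retention": 0.3,
    "brand_integrity": 0.2,
    "risk_exposure": -0.1,
}


def score(option: dict) -> float:
    # Weighted sum across all objectives; each input is normalized to 0..1
    return sum(WEIGHTS[name] * option[name] for name in WEIGHTS)


options = {
    "aggressive_upsell": {"revenue": 0.9, "retention": 0.3,
                          "brand_integrity": 0.4, "risk_exposure": 0.7},
    "loyalty_discount": {"revenue": 0.5, "retention": 0.8,
                         "brand_integrity": 0.8, "risk_exposure": 0.2},
}

best = max(options, key=lambda name: score(options[name]))
print(best)
```

With these weights the upsell wins on revenue alone but loses overall. The trade-off is visible in the numbers rather than hidden in the model.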

Goal Drift Detection

Over time, systems can drift.

Optimization may gradually deviate from original intent.

Goal drift detection mechanisms monitor alignment.

Compare system behavior with strategic benchmarks.

Trigger alerts when divergence exceeds threshold.

Goal alignment is not one-time configuration. It is continuous supervision.

The Goal Layer is not static documentation. It is living architecture.
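
Drift detection can start as a simple comparison of observed behavior against strategic benchmarks. In this sketch the metric names, benchmark values, and the 10 percent threshold are assumptions chosen for illustration.

```python
def detect_drift(observed: dict, benchmark: dict, threshold: float = 0.10) -> dict:
    """Return metrics whose relative deviation from the benchmark exceeds the threshold."""
    alerts = {}
    for metric, target in benchmark.items():
        deviation = abs(observed[metric] - target) / abs(target)
        if deviation > threshold:
            alerts[metric] = round(deviation, 3)
    return alerts


# Hypothetical benchmarks set by strategy, and values observed in production
benchmark = {"repeat_purchase_rate": 0.30, "avg_discount": 0.05}
observed = {"repeat_purchase_rate": 0.31, "avg_discount": 0.08}

alerts = detect_drift(observed, benchmark)
print(alerts)
```

Here repeat purchases are on target, but discounting has drifted well past the boundary. The system is hitting its goal by spending margin, which is exactly the kind of divergence an alert should surface.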

4. Memory Layer: Context as Competitive Asset

Memory creates intelligence.

Without memory, systems reset every interaction.

Agentic products require persistent context.

User Memory

User behavior reveals patterns.

Browsing history.

Purchase cycles.

Engagement frequency.

Persistent user memory enables personalization.

When a user returns, the system remembers.

It adapts recommendations.

It anticipates needs.

Consider Amazon.

Its recommendation engine relies on behavioral memory.

Every click influences future suggestions.

Memory compounds advantage.

System Memory

Systems must remember their own experiments.

Which A/B test improved conversion.

Which notification timing reduced churn.

Experiment history informs future hypotheses.

Without system memory, learning repeats mistakes.

With system memory, learning compounds.

Market Memory

Competitive data matters.

Pricing shifts.

Feature releases.

User sentiment.

Persistent market memory informs strategic adjustments.

Consider Spotify.

Its contextual playlists adapt based on listening trends.

Memory is not only user-level.

It is ecosystem-level.

Privacy and Governance

Memory creates risk.

Data privacy laws demand compliance.

Memory architecture must incorporate:

Data minimization.

Access controls.

Retention policies.

Trust depends on responsible memory design.

Memory is an asset.

It must be governed carefully.

5. Reasoning Layer: Decision Intelligence

The Reasoning Layer converts goals and memory into decisions.

It plans.

It evaluates.

It selects actions.

But more importantly, it prioritizes trade-offs, resolves conflicts between competing objectives, and determines when not to act.

This layer is where intelligence becomes operational.

Planning Mechanisms

Agentic systems must sequence actions.

Research first.

Then adjust interface.

Then monitor response.

Planning avoids random optimization.

Structured reasoning ensures coherence.

But planning in enterprise systems is more than sequencing tasks. It requires goal decomposition.

High-level objectives are broken into executable sub-goals.

Each sub-goal is mapped to measurable outcomes.

Dependencies are identified before execution begins.

Effective planning mechanisms include:

- Task graph construction

- Constraint-aware sequencing

- Multi-step workflow orchestration

- Parallelization where safe
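
Task graph construction and safe parallelization can be sketched with Python's standard `graphlib` module (Python 3.9 or later). The task names are hypothetical. Each batch contains tasks whose dependencies are already complete, so tasks within a batch could run in parallel.

```python
from graphlib import TopologicalSorter

# Each task maps to the set of tasks it depends on (hypothetical names)
dependencies = {
    "research_segment": set(),
    "draft_price_change": {"research_segment"},
    "draft_ui_change": {"research_segment"},
    "run_simulation": {"draft_price_change", "draft_ui_change"},
    "monitor_response": {"run_simulation"},
}

sorter = TopologicalSorter(dependencies)
sorter.prepare()  # raises graphlib.CycleError if the plan has circular dependencies

batches = []
while sorter.is_active():
    ready = sorted(sorter.get_ready())  # tasks with all dependencies satisfied
    batches.append(ready)
    sorter.done(*ready)                 # mark the batch complete to unlock the next one

for number, batch in enumerate(batches, start=1):
    print(number, batch)
```

Dynamic replanning then amounts to rebuilding the graph when an assumption fails and resuming from the tasks that are still valid.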

Advanced systems incorporate dynamic replanning.

If assumptions fail, plans adjust.

If market signals change, execution pathways shift.

Planning is not static.

It is adaptive and state-aware.

Without structured planning, agentic systems degrade into reactive scripts.

With planning, they behave strategically.

Simulation Before Action

Before executing changes globally, simulate impact.

Model predicted retention shifts.

Estimate revenue impact.

Simulation reduces risk.

It allows controlled experimentation.

In mature architectures, simulation occurs across multiple dimensions:

- Financial projection modeling

- User behavior prediction

- System performance forecasting

- Regulatory impact assessment

Simulation enables counterfactual reasoning.

What happens if we increase price by 3%?

What happens if we delay a feature rollout?

What happens if personalization intensity increases?

Enterprise agentic systems often use:

- Digital twins

- Monte Carlo simulations

- Scenario modeling engines

- A/B shadow testing environments
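
As a rough sketch, the 3% price question can be explored with a small Monte Carlo simulation. Everything numeric here is an assumption for illustration: the base price, the customer count, and especially the demand elasticity, which is drawn from a normal distribution to represent uncertainty.

```python
import random
import statistics


def simulate_price_increase(n_trials: int = 10_000, seed: int = 42) -> dict:
    rng = random.Random(seed)  # seeded so runs are repeatable
    base_price, base_customers, increase = 20.0, 1000, 0.03
    base_revenue = base_price * base_customers
    changes = []
    for _ in range(n_trials):
        # Assumed uncertainty: price elasticity of demand ~ Normal(-1.2, 0.4)
        elasticity = rng.gauss(-1.2, 0.4)
        customers = base_customers * (1 + elasticity * increase)
        new_revenue = base_price * (1 + increase) * customers
        changes.append(new_revenue - base_revenue)
    changes.sort()
    return {
        "mean_change": statistics.mean(changes),
        "p05_change": changes[int(0.05 * n_trials)],  # pessimistic 5th percentile
        "prob_loss": sum(c < 0 for c in changes) / n_trials,
    }


result = simulate_price_increase()
print(result)
```

The output is a distribution, not a single forecast. A decision rule can then be expressed against it, for example "proceed only if the probability of a revenue loss is below an agreed limit".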

Simulation separates impulsive autonomy from disciplined intelligence.

It creates a buffer between reasoning and action.

In high-scale platforms, simulation capability becomes a competitive advantage.

Because the system learns safely before it acts publicly.

Confidence Scoring

Not every decision deserves autonomy.

Confidence thresholds determine escalation.

If confidence is high, execute automatically.

If confidence low, escalate to human review.

Bounded autonomy protects enterprise risk posture.

Confidence scoring combines multiple signals:

- Model prediction probability

- Historical performance accuracy

- Data completeness

- Risk exposure classification

Confidence is not only statistical certainty.

It is contextual reliability.

A system may be 92% confident in a low-risk UI tweak.

That same confidence level may be unacceptable for financial pricing changes.

Therefore, confidence thresholds must be risk-adjusted.

Enterprises often define autonomy bands:

- Full automation zone

- Conditional automation zone

- Human-required zone
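
Risk-adjusted thresholds and autonomy bands can be expressed as a small lookup. The threshold values below are assumptions for illustration, not recommended settings.

```python
# Assumed thresholds per risk class: (minimum for full automation, minimum for conditional)
THRESHOLDS = {
    "low": (0.80, 0.60),
    "medium": (0.92, 0.75),
    "high": (0.99, 0.90),
}


def autonomy_band(confidence: float, risk: str) -> str:
    auto_min, conditional_min = THRESHOLDS[risk]
    if confidence >= auto_min:
        return "full_automation"
    if confidence >= conditional_min:
        return "conditional_automation"
    return "human_required"


print(autonomy_band(0.92, "low"))    # low-risk UI tweak
print(autonomy_band(0.92, "high"))   # financial pricing change
print(autonomy_band(0.70, "high"))
```

The same 92% confidence lands in different bands depending on risk. That is the point of risk adjustment.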

Confidence scoring ensures that autonomy scales responsibly.

Without it, the system either becomes overly cautious or dangerously reckless.

Human-in-the-Loop Escalation

Human oversight remains essential.

Escalation pathways must be defined.

High-risk pricing change.

Sensitive user communication.

Human review provides ethical and strategic judgment.

But escalation cannot be informal or ambiguous.

It must be architected.

Clear triggers must exist:

- Risk classification threshold exceeded

- Confidence below boundary

- Regulatory category triggered

- Ethical sensitivity detected

Escalation mechanisms should include:

- Structured review dashboards

- Decision explanation summaries

- Traceable reasoning logs

- Override and rollback capabilities

Human reviewers should not be overwhelmed with noise.

Escalation should be rare but meaningful.

The goal is not to slow the system.

The goal is to protect strategic integrity.

Autonomy is powerful.

But it must remain bounded.

And bounded autonomy is not a limitation.

It is a design principle.

6. Action Layer: Tooling and Execution

Intelligence without action is theory.

The Action Layer connects reasoning to execution.

It transforms decisions into system-level change.

It converts strategy into operational movement.

It is where autonomy touches the real world.

Without this layer, agentic architecture remains analytical rather than transformative.

API Integration

Agentic systems must interact with core systems.

Billing engines.

CRM platforms.

Marketing automation.

APIs enable execution.

Without integration, autonomy is cosmetic.

But enterprise-grade API integration requires more than connectivity.

It requires reliability, authentication, observability, and governance.

Action endpoints must support:

- Idempotent operations

- Rate limiting

- Secure authentication (OAuth, signed tokens)

- Version compatibility management

Agentic systems must understand operational constraints:

- Can pricing updates occur in real time?

- Are inventory systems eventually consistent?

- What are the transactional boundaries?

Integration must also account for system latency.

If the reasoning layer acts faster than downstream systems can process changes, instability emerges.

Resilient execution patterns include:

- Retry mechanisms with exponential backoff

- Circuit breakers

- Graceful degradation
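
Retry with exponential backoff is the simplest of these patterns to sketch. The `TransientError` type and the `flaky_update` function are hypothetical stand-ins for a downstream system. Retrying is only safe when the operation is idempotent, which is why idempotency appears in the endpoint requirements above.

```python
import time


class TransientError(Exception):
    """Hypothetical error type for failures that are worth retrying."""


def call_with_backoff(operation, max_attempts=4, base_delay=0.5, sleep=time.sleep):
    for attempt in range(max_attempts):
        try:
            return operation()
        except TransientError:
            if attempt == max_attempts - 1:
                raise                          # out of attempts: surface the failure
            sleep(base_delay * 2 ** attempt)   # wait 0.5s, 1s, 2s, ...


# Simulated downstream call that fails twice before succeeding
attempts = {"count": 0}


def flaky_update():
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise TransientError("downstream system busy")
    return "price updated"


delays = []  # record delays instead of actually sleeping
result = call_with_backoff(flaky_update, sleep=delays.append)
print(result, delays)
```

Production systems usually add random jitter to the delay and wrap the call in a circuit breaker, so that a failing dependency is not hammered by many agents at once.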

The Action Layer is not simply “call an API.”

It is coordinated, fault-tolerant orchestration across enterprise systems.

True autonomy requires operational fluency.

External Tool Invocation

Agents may access search tools.

Analytics engines.

Payment gateways.

Tooling expands capability.

Execution must be secure.

External tools introduce power but also exposure.

Each tool invocation must be:

- Authorized

- Context-aware

- Logged

- Risk-classified

An agent calling a search engine is low-risk.

An agent initiating a financial transaction is high-risk.

Tool invocation frameworks should support:

- Tool selection logic

- Parameter validation

- Output validation

- Sandboxed execution environments
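
A tool invocation wrapper might enforce these checks in one place. The sketch below is an assumption about how such a wrapper could look. The registry layout, tool names, and handler functions are hypothetical, and sandboxing and output validation are left out for brevity.

```python
# Hypothetical registry: each tool declares its risk class and expected parameter types
TOOL_REGISTRY = {
    "web_search": {"risk": "low", "params": {"query": str}},
    "issue_refund": {"risk": "high", "params": {"order_id": str, "amount": float}},
}

audit_log = []


def invoke_tool(permissions, tool, params, handlers):
    spec = TOOL_REGISTRY.get(tool)
    if spec is None:
        raise KeyError(f"unknown tool: {tool}")
    if tool not in permissions:                       # authorization check
        raise PermissionError(f"agent may not call {tool}")
    if set(params) != set(spec["params"]):            # parameter validation: names
        raise ValueError(f"unexpected parameters for {tool}")
    for name, expected_type in spec["params"].items():
        if not isinstance(params[name], expected_type):   # parameter validation: types
            raise TypeError(f"{name} must be {expected_type.__name__}")
    result = handlers[tool](**params)
    audit_log.append({"tool": tool, "risk": spec["risk"], "params": dict(params)})
    return result


handlers = {
    "web_search": lambda query: f"results for {query}",
    "issue_refund": lambda order_id, amount: f"refunded {amount} on {order_id}",
}

search_result = invoke_tool({"web_search"}, "web_search", {"query": "competitor pricing"}, handlers)
print(search_result, audit_log)
```

An agent holding only the `web_search` permission cannot reach `issue_refund`, even if its reasoning selects that tool.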

Agents must also understand when tool outputs are unreliable.

External systems may produce incomplete or noisy data.

Reasoning engines must validate before acting.

Capability expansion should not compromise governance.

Tooling should be modular.

Replaceable.

Version-controlled.

The Action Layer must evolve as tools evolve.

Because autonomy scales with the quality of its tools.

Safe Execution Patterns

Every action must be auditable.

Log changes.

Track triggers.

Enable rollback.

If a pricing experiment fails, revert quickly.

Execution without safeguards creates instability.

Enterprise-grade execution requires structured safety patterns:

- Transactional boundaries

- Feature flag gating

- Canary deployments

- Gradual rollouts

Every automated action should generate:

- Timestamp

- Decision trace reference

- Confidence score

- Risk classification

- Affected entities

This creates forensic traceability.
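
The fields listed above map naturally onto a structured record. This is one possible shape, with hypothetical field names and identifiers, serialized as a JSON line for an append-only log.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)  # frozen: a record cannot be altered after creation
class ActionRecord:
    timestamp: str
    decision_trace_id: str
    confidence: float
    risk_class: str
    affected_entities: tuple


def record_action(decision_trace_id, confidence, risk_class, affected_entities):
    return ActionRecord(
        timestamp=datetime.now(timezone.utc).isoformat(),
        decision_trace_id=decision_trace_id,
        confidence=confidence,
        risk_class=risk_class,
        affected_entities=tuple(affected_entities),
    )


# Hypothetical identifiers for illustration
record = record_action("trace-0001", 0.92, "tier_1", ["sku-123", "sku-456"])
line = json.dumps(asdict(record), sort_keys=True)
print(line)
```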

Rollback mechanisms must be predefined, not improvised.

- Restore previous configuration state

- Reverse financial adjustments

- Disable triggered workflows

Additionally, “kill switches” should exist for high-risk automation zones.

If anomaly detection identifies unintended impact, autonomy must pause.
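
A predefined rollback and a kill switch can be sketched together. The `GuardedConfig` class is hypothetical. It snapshots state before every change, so reverting never depends on improvisation.

```python
class GuardedConfig:
    def __init__(self, initial: dict):
        self._current = dict(initial)
        self._history = []      # snapshots taken before each change
        self.paused = False     # kill switch state

    @property
    def current(self) -> dict:
        return dict(self._current)

    def apply(self, changes: dict) -> None:
        if self.paused:
            raise RuntimeError("automation paused by kill switch")
        self._history.append(dict(self._current))  # snapshot first, then change
        self._current.update(changes)

    def rollback(self) -> None:
        if self._history:
            self._current = self._history.pop()    # restore previous configuration state

    def kill(self) -> None:
        self.paused = True   # block further automated changes until a human resumes


config = GuardedConfig({"price": 20.0})
config.apply({"price": 21.0})   # automated pricing experiment
config.rollback()               # experiment failed: revert
config.kill()                   # anomaly detected: pause autonomy
print(config.current, config.paused)
```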

Safe execution does not slow innovation.

It enables sustainable innovation.

The Action Layer must combine agility with control.

Speed without safeguards leads to volatility.

Control without agility leads to stagnation.

Agentic execution demands both.

7. Feedback Layer: Continuous Learning Systems

Feedback converts action into improvement.

Without feedback, autonomy stagnates.

The Feedback Layer closes the loop between decision and outcome.

It measures impact.

It validates assumptions.

It recalibrates future behavior.

This layer determines whether the system merely acts or truly evolves.

Reinforcement Learning Loops

Actions generate rewards.

Positive outcomes increase probability of repetition.

Negative outcomes reduce future selection.

Reward design shapes system behavior.

But reward design must be intentional.

If rewards optimize only short-term clicks, the system may sacrifice long-term trust.

If rewards optimize only revenue, the system may erode user experience.

Reward functions must align with enterprise KPIs:

- Retention

- Margin

- Customer lifetime value

- Risk-adjusted return

Reinforcement loops require:

- Clearly defined reward signals

- Delayed reward attribution models

- Exploration vs exploitation balancing
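
The exploration versus exploitation balance can be illustrated with an epsilon-greedy bandit, one of the simplest reinforcement techniques. It is a swapped-in teaching example, not a claim about how any particular product learns. The action names and conversion rates are invented, and delayed reward attribution is not modeled here.

```python
import random


class EpsilonGreedy:
    def __init__(self, actions, epsilon=0.1, seed=0):
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.counts = {a: 0 for a in actions}
        self.values = {a: 0.0 for a in actions}   # running average reward per action

    def select(self):
        if self.rng.random() < self.epsilon:
            return self.rng.choice(list(self.counts))   # explore: try a random action
        return max(self.values, key=self.values.get)    # exploit: best estimate so far

    def update(self, action, reward):
        self.counts[action] += 1
        n = self.counts[action]
        self.values[action] += (reward - self.values[action]) / n  # incremental mean


# Hypothetical true conversion rates, unknown to the agent
true_rates = {"morning_push": 0.05, "evening_push": 0.12}
bandit = EpsilonGreedy(true_rates)
environment = random.Random(1)

for _ in range(5000):
    action = bandit.select()
    reward = 1.0 if environment.random() < true_rates[action] else 0.0
    bandit.update(action, reward)

print(bandit.counts, bandit.values)
```

Over enough rounds the agent typically concentrates on the better action while still sampling the alternative. If the reward were clicks alone, this same loop would happily optimize for clicks alone, which is why reward design matters more than the algorithm.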

Delayed rewards are especially complex.

A pricing change may impact churn months later.

A recommendation tweak may influence lifetime value over quarters.

The system must attribute outcomes across time horizons.

Well-designed reinforcement loops create strategic learning.

Poorly designed loops create metric gaming.

Autonomy improves only when rewards reflect true value creation.

Experimentation Infrastructure

A/B testing frameworks measure impact.

Control groups compare performance.

High-frequency experimentation increases learning speed.

But experimentation must scale beyond simple binary tests.

Modern agentic systems require:

- Multivariate testing

- Context-aware experimentation

- Segmented cohort analysis

- Longitudinal performance tracking

Infrastructure must support:

- Real-time metric dashboards

- Automated hypothesis generation

- Safe rollout gating

Shadow testing can validate decisions before global deployment.

Canary releases reduce systemic risk.

Experimentation infrastructure transforms intuition into measurable evidence.

Without structured experimentation, feedback becomes anecdotal.

With experimentation, learning becomes systematic.

Learning velocity becomes an operational capability instead of a side effect.

Signal Filtering

Not every signal reflects causation.

Noise must be filtered.

Statistical validation prevents false optimization.

User behavior is volatile.

Market conditions fluctuate.

External events distort metrics.

Signal filtering requires:

- Statistical significance testing

- Confidence interval analysis

- Drift detection

- Anomaly detection
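
Statistical significance testing can be shown with a two-proportion z-test, a standard check for A/B conversion results. The visitor and conversion counts are made up for the example.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference between two conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_error
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided tail of the standard normal
    return z, p_value


# Hypothetical experiment: control converts 5.0%, variant converts 5.6%
z, p_value = two_proportion_z_test(conv_a=500, n_a=10_000, conv_b=560, n_b=10_000)
print(round(z, 2), round(p_value, 3))
```

A 12 percent relative lift looks convincing on a dashboard. At a conventional 0.05 significance level, this sample does not yet support acting on it.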

Spurious correlations must be eliminated.

An engagement spike may result from seasonality, not personalization quality.

A revenue drop may stem from macroeconomic shifts, not algorithm error.

Feedback systems must differentiate:

- Correlation vs causation

- Temporary variance vs structural change

- Outlier behavior vs systemic trend

Without filtering, the system overreacts.

With filtering, the system adapts rationally.

Precision in learning determines stability in growth.

Feedback Velocity as Competitive Moat

Speed matters.

The faster feedback cycles operate, the faster advantage compounds.

Short cycles enable rapid iteration.

Rapid iteration improves model accuracy.

Improved accuracy enhances user experience.

Enhanced experience drives retention.

Consider Netflix.

Personalization models adjust continuously.

Engagement signals refine recommendations.

Feedback velocity drives retention.

But velocity alone is insufficient.

It must be paired with disciplined validation.

Organizations with weekly learning cycles tend to outpace those with quarterly cycles.

Organizations with daily model updates tend to outpace those with annual retraining.

Feedback velocity creates compounding intelligence.

Each loop improves the next loop.

Each iteration sharpens strategic precision.

Continuous learning separates leaders from followers.

Because in agentic systems, advantage does not come from one breakthrough.

It comes from thousands of rapid, validated improvements over time.

8. Governance Layer: Risk, Ethics, and Control

Governance is not bureaucracy.

It is structural necessity.

Autonomous systems amplify both intelligence and error.

The same system that optimizes revenue at scale can also propagate bias at scale.

The same engine that accelerates growth can also accelerate regulatory exposure.

Governance is the stabilizing force that ensures autonomy operates within acceptable bounds.

It defines what the system may do.

It defines what the system must not do.

It defines when human authority overrides machine execution.

Without governance, autonomy becomes volatility.

Risk Categorization

Decisions vary in risk.

Low-risk UI adjustment.

High-risk pricing change.

Categorize actions.

Define autonomy boundaries accordingly.

Risk categorization must be systematic, not intuitive.

Each action should be scored across dimensions such as:

- Financial impact

- Reputational exposure

- Regulatory sensitivity

- Customer harm potential

- Systemic dependency risk

Risk tiers can be defined:

- Tier 1: Low-risk, reversible optimization

- Tier 2: Moderate-risk, monitored autonomy

- Tier 3: High-risk, mandatory human approval
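
A sketch of systematic tiering follows. The 1 to 5 scale, the cutoffs, and the rule that the worst dimension drives the tier are assumptions. An enterprise might instead use weighted sums or regulatory overrides.

```python
DIMENSIONS = (
    "financial_impact",
    "reputational_exposure",
    "regulatory_sensitivity",
    "customer_harm_potential",
    "systemic_dependency_risk",
)


def risk_tier(scores: dict) -> int:
    """Map per-dimension scores (1 = negligible, 5 = severe) to a tier from 1 to 3."""
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"unscored dimensions: {missing}")  # no tier without a full assessment
    worst = max(scores[d] for d in DIMENSIONS)
    if worst >= 4:
        return 3   # high-risk: mandatory human approval
    if worst >= 3:
        return 2   # moderate-risk: monitored autonomy
    return 1       # low-risk: reversible optimization


ui_tweak = dict.fromkeys(DIMENSIONS, 1)
pricing_change = {**ui_tweak, "financial_impact": 4, "regulatory_sensitivity": 3}

print(risk_tier(ui_tweak), risk_tier(pricing_change))
```

Using the worst dimension prevents a severe regulatory risk from being averaged away by low scores elsewhere.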

This classification informs execution policy.

Low-risk actions may execute automatically.

High-risk actions require multi-level authorization.

Risk categorization transforms governance from abstract policy into operational control.

Without structured risk modeling, escalation becomes inconsistent.

With structured risk modeling, autonomy scales responsibly.

Explainability

Traceability builds trust.

Log decisions.

Record reasoning context.

When regulators inquire, evidence must exist.

Explainability reduces uncertainty.

But explainability must go beyond surface-level logging.

Systems should capture:

- Input signals used in decision-making

- Goal prioritization state

- Confidence scores

- Alternative options considered

- Final selection rationale

Decision lineage must be reconstructible.

If a pricing model adjusts rates, the organization should answer:

- Why was this user affected?

- What signals influenced the change?

- Was bias tested?

Explainability enables internal accountability and external defensibility.

Opaque autonomy erodes stakeholder confidence.

Transparent autonomy strengthens institutional trust.

Explainability is not optional in enterprise environments. It is foundational.

Regulatory Compliance

Different regions impose different AI rules.

Data localization.

User consent.

Algorithm transparency.

The Governance Layer enforces compliance rules.

Compliance must be embedded at design time, not retrofitted.

Architectural controls should include:

- Data residency enforcement mechanisms

- Consent-aware personalization logic

- Automated compliance auditing

- Access control policies aligned with jurisdiction

Global enterprises must support multi-region governance models.

What is permissible in one country may be restricted in another.

AI systems must adapt accordingly.

Regulatory change is continuous.

Governance architectures must support policy updates without system redesign.

Compliance is not a constraint on innovation.

It is a boundary condition within which sustainable innovation occurs.

Ignoring regulatory architecture introduces existential risk.

Organizational Oversight

AI governance boards review policies.

Escalation mechanisms ensure accountability.

Consider Microsoft and its Responsible AI principles.

Governance must integrate into architecture.

Not remain policy on paper.

Oversight structures should include:

- Cross-functional review committees

- Ethical risk assessments

- Periodic model audits

- Bias testing protocols

Human oversight must have real authority, not symbolic presence.

Governance roles should define:

- Who approves high-risk deployments

- Who monitors systemic drift

- Who halts automation during anomalies

Architectural hooks must exist to enforce oversight decisions.

If a governance board mandates suspension, the system must comply instantly.

Responsible AI frameworks, such as those formalized by organizations like Microsoft, demonstrate that governance principles must be operationalized through engineering discipline.

Governance is not an afterthought.

It is a co-equal layer in the agentic stack.

When embedded structurally, governance becomes an enabler of trust.

And trust is the ultimate foundation of scalable autonomy.

9. Case Study: Layered Architecture in Practice

Consider Amazon's dynamic pricing and recommendations.

Let us map the layers.

Goal Layer:

Maximize conversion while protecting margin.

Memory Layer:

User browsing history.

Purchase behavior.

Inventory levels.

Reasoning Layer:

Elasticity modeling.

Demand forecasting.

Action Layer:

Adjust price.

Update recommendation placement.

Feedback Layer:

Track conversion response.

Measure profit impact.

Governance Layer:

Compliance checks.

Fair pricing policies.

Each layer supports the next.

Remove memory and personalization collapses.

Remove governance and regulatory risk increases.

The architecture sustains autonomy.

10. Designing for Scale: Enterprise Agentic Infrastructure

Single-product autonomy is powerful.

Enterprise-wide autonomy is transformative.

When multiple products, business units, and regions operate with coordinated intelligence, the organization evolves from isolated optimization to systemic advantage.

Scaling agentic systems is not about deploying more models.

It is about architecting an infrastructure that sustains autonomy across the enterprise.

Centralized vs Distributed Agents

Central orchestrator models centralize intelligence.

Distributed agent meshes decentralize execution.

Both models require coordination.

In centralized architectures, a master orchestrator manages planning, prioritization, and policy enforcement.

This approach simplifies governance and ensures strategic consistency.

However, centralization may introduce bottlenecks.

Latency increases.

Domain specialization weakens.

Distributed agent meshes, by contrast, allow domain-specific agents to operate autonomously within bounded contexts.

Product agents optimize conversion.

Pricing agents manage elasticity.

Support agents refine customer satisfaction.

Distributed models increase resilience.

If one agent fails, the system continues operating.

But decentralization introduces complexity:

- Cross-agent communication protocols

- Conflict resolution mechanisms

- Shared goal alignment

Hybrid models often emerge as optimal.

A central policy layer defines objectives and governance constraints.

Distributed agents execute within those constraints.

Scale requires architectural clarity on where intelligence resides and how coordination occurs.

Shared Memory Fabric

Enterprise data lakes support cross-product intelligence.

Shared vector stores enable semantic retrieval.

Memory sharing accelerates learning across teams.

But shared memory must be structured, not chaotic.

A shared memory fabric should include:

- Structured operational data

- Unstructured contextual data

- Historical experiment outcomes

- Cross-product performance metrics

This enables knowledge transfer.

If one product learns that a pricing tactic reduces churn in a segment, that insight can propagate enterprise-wide.

Shared memory also prevents duplication of learning.

Teams do not repeatedly test what has already been invalidated.

However, shared memory must respect data boundaries.

- Tenant isolation

- Jurisdictional data constraints

- Access control policies

Semantic retrieval systems powered by vector stores allow agents to query enterprise knowledge in context-aware ways.

Memory becomes a strategic asset when it is reusable, governed, and interoperable.

Without shared memory, autonomy fragments.

With shared memory, autonomy compounds.

Platformization

Reusable SDKs reduce duplication.

Internal AI platforms standardize governance.

Scalable architecture ensures consistency.

Platformization transforms isolated agent implementations into enterprise capabilities.

Core components should be abstracted into reusable modules:

- Goal definition frameworks

- Memory connectors

- Reasoning orchestration engines

- Tool invocation adapters

- Governance enforcement APIs

An internal AI platform provides:

- Standardized deployment pipelines

- Monitoring dashboards

- Risk classification tooling

- Audit logging infrastructure

This reduces shadow AI initiatives.

It prevents fragmented experimentation.

Platformization also accelerates innovation.

New teams can launch agentic capabilities without rebuilding foundational layers.

They focus on domain differentiation rather than infrastructure recreation.

Enterprise autonomy becomes sustainable only when supported by shared platforms.

Otherwise, scale produces inconsistency.

Cost Governance

Inference costs accumulate.

Optimization strategies include:

- Model selection

- Caching strategies

- Batch processing

Financial discipline sustains autonomy at scale.

Agentic systems may generate thousands or millions of inference calls daily.

Without cost controls, operational expenditure escalates rapidly.

Cost governance requires visibility.

- Per-agent cost tracking

- Per-decision cost attribution

- Cost-to-value ratio analysis

Optimization techniques include:

- Tiered model routing (use smaller models when sufficient)

- Response caching for repeated queries

- Precomputation for predictable tasks

- Asynchronous processing where latency tolerance exists
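
Tiered routing and response caching can be combined in a few lines. The per-call costs, the routing rule, and the placeholder response are assumptions. A real router would likely use a classifier or a confidence signal rather than prompt length.

```python
from functools import lru_cache

# Hypothetical per-call costs in dollars
COST_PER_CALL = {"small": 0.001, "large": 0.03}
spend = {"small": 0.0, "large": 0.0}   # per-model cost tracking


def choose_model(prompt: str) -> str:
    # Assumed routing rule: long or analytical prompts go to the larger model
    if len(prompt.split()) > 50 or "analyze" in prompt.lower():
        return "large"
    return "small"


@lru_cache(maxsize=1024)   # identical prompts are answered from cache at no cost
def answer(prompt: str) -> str:
    model = choose_model(prompt)
    spend[model] += COST_PER_CALL[model]
    return f"[{model}] response to: {prompt}"   # placeholder for a real inference call


answer("What is our refund policy?")
answer("What is our refund policy?")            # cache hit: no additional spend
answer("Analyze churn drivers for the enterprise segment")

print(spend, answer.cache_info())
```

Three requests produce two paid calls. The same counters support per-agent and per-decision cost attribution once an agent identifier is added to the key.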

Architectural decisions influence cost structure.

A poorly designed feedback loop may trigger unnecessary retraining.

An overly complex reasoning chain may inflate token usage.

Cost efficiency must be considered alongside accuracy and latency.

Enterprise-scale autonomy demands economic sustainability.

Intelligence must not only be powerful.

It must also be economically rational.

When cost governance is embedded structurally, autonomy becomes durable rather than experimental.

Closing Reflection

Architecture shapes destiny.

Strategy without structure collapses.

Agentic products require layered design.

Goals provide direction.

Memory provides context.

Reasoning provides intelligence.

Action provides execution.

Feedback provides learning.

Governance provides control.

Remove one layer and autonomy weakens.

Integrate all layers and the system adapts.

This is not speculative.

It is structural inevitability.

As autonomy scales, products become ecosystems.

As ecosystems mature, organizations evolve.

The leaders who understand architecture will shape the future.

The leaders who ignore it will struggle to contain it.

Agentic architecture is not optional.

It is the foundation of competitive strategy in the decade ahead.

Disclaimer: This post provides general information and is not tailored to any specific individual or entity. It includes only publicly available information for general awareness purposes. The author does not warrant that this post is free from errors or omissions. Views are personal.