Mapping Agentic AI to Product Strategy - Part 6 - Autonomous Post-Launch Optimization - Designing Perpetual Value Loops

This is the sixth article in the series Mapping Agentic AI to Product Strategy.

1. The Myth of “Launch Complete”

Launch has long been celebrated.

Teams work for months.

Features are built.

Marketing prepares campaigns.

Release day arrives.

Applause follows.

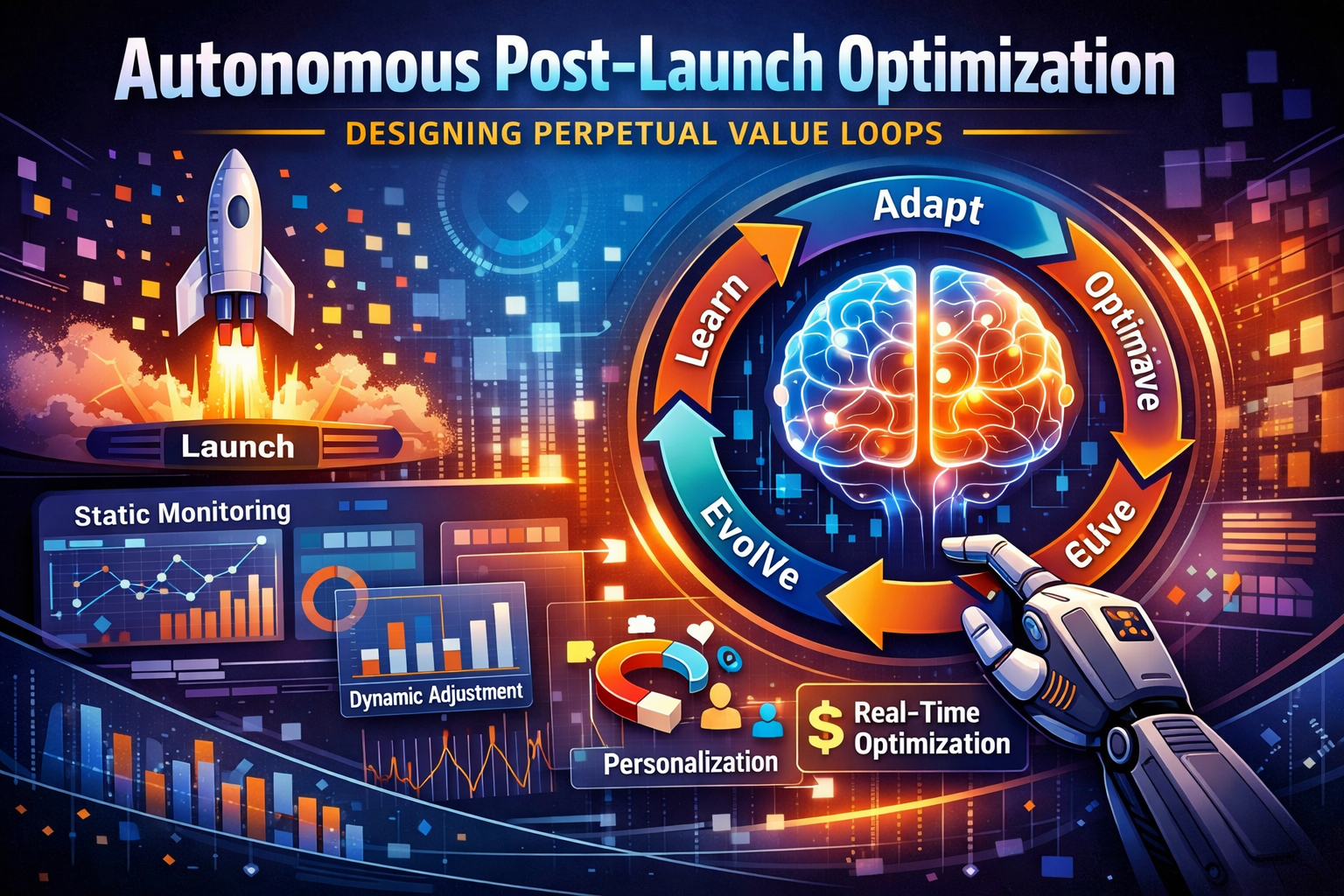

But in an agentic world, launch is not completion.

It is activation.

Traditional product thinking treats launch as a milestone.

Agentic product thinking treats launch as a trigger.

Trigger for learning.

Trigger for adaptation.

Trigger for optimization.

1.1 Launch as Milestone vs Launch as Trigger

In legacy models, shipping marks success.

Metrics are reviewed weekly.

Dashboards display numbers.

Teams observe performance.

Observation alone does not improve outcomes.

Agentic systems act.

They adjust onboarding flows.

They personalize recommendations.

They tune pricing dynamically.

Launch activates the loop.

The system begins to learn at scale.

1.2 Static Monitoring vs Dynamic Adaptation

Dashboards provide visibility.

But visibility without action is passive.

Traditional monitoring detects issues.

Manual decisions follow.

Time lag creates decay.

Agentic optimization reduces lag.

Signals trigger adjustment automatically.

Engagement drops.

Notifications adjust.

Conversion slows.

Offers recalibrate.

The system responds without waiting for meetings.

1.3 The Hidden Cost of Post-Launch Neglect

Every product decays without refinement.

User expectations rise.

Competitors evolve.

If no adaptive loop exists, engagement weakens gradually.

Consider Netflix.

Its personalization system never pauses.

Or Amazon.

Experimentation continues after release.

Continuous optimization prevents decay.

Launch is not the finish line.

It is the beginning of compounding intelligence.

2. From Monitoring to Autonomous Optimization

Monitoring measures performance.

It observes outcomes.

It reports deviations.

It informs analysis.

Optimization changes performance.

It intervenes.

It reallocates.

It adjusts system variables in pursuit of better outcomes.

Traditional digital products stop at monitoring.

Dashboards surface trends.

Teams debate insights.

Manual interventions follow.

Agentic products move beyond passive observation.

They transition from measurement to intervention.

They do not merely describe performance.

They modify it.

The system becomes an active participant in value creation.

2.1 Telemetry as Fuel

Optimization requires data.

Not summary reports.

Not lagging indicators alone.

High-resolution telemetry captures micro-behaviors.

Scroll depth.

Click timing.

Session frequency.

Feature dwell time.

Navigation pathways.

Abandonment points.

These granular signals reveal behavioral intent.

They surface friction before churn materializes.

They expose engagement shifts before revenue declines.

Granular signals enable precision.

The system can detect marginal lift opportunities.

It can personalize at segment or even individual level.

It can experiment with micro-adjustments instead of sweeping changes.

Low-resolution metrics blur opportunity.

Aggregate averages conceal edge cases.

Monthly summaries delay response.

Vanity metrics distort prioritization.

Telemetry fuels the optimization engine.

Without continuous signal ingestion, autonomy degrades into guesswork.

In agentic systems, data is not an output of the product.

It is an input to continuous evolution.

2.2 Evolution of Optimization

Optimization evolved in stages.

The first stage was rule-based.

“If metric drops below threshold, send alert.”

“If conversion declines, notify team.”

Humans remained the decision-makers.

The second stage was predictive.

Forecast churn probability.

Estimate lifetime value.

Anticipate demand fluctuations.

Prediction improved foresight, but action remained manual.

The third stage is agentic.

Predict.

Act.

Learn.

Adjust.

The system not only forecasts churn risk — it triggers retention interventions.

It not only detects engagement shifts — it modifies content sequencing.

It not only anticipates demand — it adjusts pricing or exposure dynamically.

The loop closes automatically.

Observation feeds prediction.

Prediction informs action.

Action generates new data.

New data refines the model.

Optimization becomes self-reinforcing.

Human oversight remains strategic.

But operational intervention becomes autonomous.

2.3 Decision Thresholds

Autonomy requires boundaries.

Not all actions should be automatic.

Unconstrained intervention can destabilize user experience, revenue predictability, or brand perception.

Confidence thresholds define autonomy.

High-confidence projections — supported by strong data density and low variance — allow automatic adjustment.

Low-confidence scenarios require human review.

For example:

Minor UI personalization may auto-adjust at high confidence.

Pricing experiments with significant revenue impact may require approval.

Thresholds can incorporate:

- Prediction confidence score.

- Risk weighting.

- Customer segment sensitivity.

- Revenue exposure magnitude.
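
One way to picture these thresholds is as a simple routing rule. The sketch below is illustrative only: the `ProposedAction` fields, the limit values, and the `route` function are assumptions made for this example, not part of any specific product or library.

```python
from dataclasses import dataclass


@dataclass
class ProposedAction:
    name: str
    confidence: float           # prediction confidence score, 0..1
    risk_weight: float          # 0 (harmless) .. 1 (high risk), set by policy
    segment_sensitivity: float  # 0 .. 1, higher for sensitive customer segments
    revenue_exposure: float     # revenue at stake, in currency units


# Hypothetical limits; real values would come from governance policy.
MIN_CONFIDENCE = 0.90
MAX_RISK = 0.30
MAX_SENSITIVITY = 0.50
MAX_REVENUE_EXPOSURE = 10_000.0


def route(action: ProposedAction) -> str:
    """Return 'auto' only when every threshold is satisfied, else 'human_review'."""
    within_bounds = (
        action.confidence >= MIN_CONFIDENCE
        and action.risk_weight <= MAX_RISK
        and action.segment_sensitivity <= MAX_SENSITIVITY
        and action.revenue_exposure <= MAX_REVENUE_EXPOSURE
    )
    return "auto" if within_bounds else "human_review"


ui_tweak = ProposedAction("reorder_home_tiles", 0.96, 0.05, 0.10, 500.0)
price_test = ProposedAction("raise_plan_price", 0.97, 0.60, 0.40, 250_000.0)
print(route(ui_tweak))    # minor UI personalization stays automatic
print(route(price_test))  # high revenue exposure escalates to a human
```

Note that the pricing example is escalated even though its confidence is high: confidence alone does not grant autonomy when risk or revenue exposure exceeds its limit.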

Boundaries maintain stability.

They prevent oscillation.

They reduce unintended consequences.

They preserve strategic coherence.

Autonomy operates within defined guardrails.

Without thresholds, automation becomes volatility.

With thresholds, automation becomes disciplined acceleration.

2.4 Feedback Velocity and Compounding Advantage

Speed amplifies learning.

Faster feedback loops compound advantage.

If optimization occurs quarterly, improvement is incremental.

If optimization occurs weekly, learning accelerates.

If optimization occurs hourly, adaptation compounds.

Small gains accumulate rapidly.

A 0.5% engagement lift compounded daily outperforms sporadic 5% jumps.

Continuous micro-adjustments outperform occasional large pivots.
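
The arithmetic behind compounding is easy to check. The sketch below assumes, purely for illustration, that a 0.5% lift can be sustained every day and that a "sporadic" cadence means one 5% jump per quarter; `compound` is a hypothetical helper.

```python
def compound(lift_per_step: float, steps: int) -> float:
    """Growth multiplier after applying the same relative lift `steps` times."""
    return (1.0 + lift_per_step) ** steps


daily_30 = compound(0.005, 30)        # 30 days of 0.5% daily lifts, about 1.16x
daily_365 = compound(0.005, 365)      # a full year of 0.5% daily lifts
quarterly_jumps = compound(0.05, 4)   # four 5% jumps in a year, about 1.22x

print(f"30 daily lifts:     {daily_30:.4f}x")
print(f"365 daily lifts:    {daily_365:.2f}x")
print(f"4 quarterly jumps:  {quarterly_jumps:.4f}x")
```

Under these idealized assumptions, a single month of daily lifts already approaches what four quarterly jumps deliver in a year. Real lifts rarely sustain at a constant rate, so treat this as intuition for the shape of the curve, not a forecast.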

Companies that act on what they measure faster than their competitors pull ahead.

But the true leverage lies in closing the loop:

- Measure faster.

- Decide faster.

- Act faster.

- Learn faster.

Feedback velocity becomes a strategic moat.

Competitors may replicate features.

They cannot easily replicate learning speed embedded in autonomous systems.

In agentic markets, advantage is not static differentiation.

It is dynamic adaptation.

The organization that optimizes continuously compounds intelligence over time.

And compounding intelligence becomes defensible advantage.

3. Designing Engagement Loops

Engagement does not happen randomly.

It is engineered.

User behavior follows patterns.

Attention follows incentives.

Habits form through repetition and reinforcement.

Agentic products do not wait for engagement to occur.

They design for it deliberately.

They architect behavioral pathways that increase value exchange between user and system.

Well-designed engagement loops transform occasional interaction into sustained participation.

3.1 Trigger → Action → Reward → Reinforcement

Every engagement loop follows a behavioral structure.

Trigger invites action.

Action produces reward.

Reward reinforces habit.

Triggers can be internal (boredom, curiosity, need) or external (notification, email, recommendation).

Action must be friction-light.

Reward must be meaningful.

Reinforcement must create anticipation for the next cycle.

Agentic systems personalize triggers dynamically.

Timing adjusts based on usage patterns.

Content adapts to preference clusters.

Channel selection shifts according to response likelihood.

If a user engages more through push notifications than email, distribution prioritizes push.

If responsiveness declines, trigger frequency recalibrates.

Reinforcement strengthens retention by increasing perceived value per interaction.

Over time, loops become self-sustaining.

The product becomes integrated into behavioral routine.

Engagement engineering, when done responsibly, builds durable usage patterns rather than sporadic spikes.

3.2 Personalized Trigger Systems

Generic notifications create fatigue.

Batch messaging ignores behavioral diversity.

When every user receives the same prompt at the same time, relevance declines.

Personalized triggers create contextual alignment.

If a user is most active at night, timing shifts accordingly.

If engagement peaks during commute hours, triggers adapt.

If responsiveness declines, cadence decreases.
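
A minimal sketch of the timing and cadence rules above, assuming session start times are available per user. The function names, the default send hour, and the 5% response floor are hypothetical choices for illustration.

```python
from collections import Counter
from datetime import datetime


def preferred_send_hour(session_starts: list[datetime], default_hour: int = 9) -> int:
    """Return the hour of day (0-23) in which the user most often starts sessions."""
    if not session_starts:
        return default_hour
    counts = Counter(ts.hour for ts in session_starts)
    # most_common(1) returns [(hour, count)] for the most frequent hour
    return counts.most_common(1)[0][0]


def next_cadence(per_week: int, response_rate: float, floor: float = 0.05) -> int:
    """Halve the weekly trigger count (never below 1) when responsiveness drops."""
    if response_rate < floor:
        return max(1, per_week // 2)
    return per_week


night_owl = [
    datetime(2024, 1, 1, 22, 5),
    datetime(2024, 1, 2, 22, 40),
    datetime(2024, 1, 3, 8, 15),
]
print(preferred_send_hour(night_owl))          # the user's most active hour
print(next_cadence(6, response_rate=0.02))     # low responsiveness, fewer triggers
```

A production system would likely learn these rules rather than hard-code them, but the fixed version makes the intended behavior explicit.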

Content personalization enhances resonance.

If a user responds to educational material, prioritize educational prompts.

If entertainment drives interaction, shift content mix accordingly.

If transactional behavior dominates, surface relevant offers.

Personalization increases signal-to-noise ratio.

Fewer interruptions.

Higher relevance.

Greater conversion probability.

Agentic systems continuously refine trigger logic through reinforcement learning.

They test cadence.

They test tone.

They test channel mix.

Over time, the system learns not just what to send — but when and how to send it.

Precision replaces volume.

3.3 Behavioral Micro-Adjustments

Behavior is sensitive to subtle cues.

Button placement influences click probability.

Color contrast affects visibility.

Content order shapes attention hierarchy.

Default settings alter decision paths.

Small interface changes can shift behavior meaningfully.

Agentic systems test micro-adjustments continuously.

A/B testing evolves into multi-variant optimization.

Interface components adapt based on segment-level response.

Layout prioritization shifts based on engagement density.

Performance improves incrementally.

A slight improvement in click-through rate.

A marginal reduction in friction.

A modest increase in session duration.

Micro gains compound over time.

When optimization occurs at scale and frequency, incremental lifts accumulate into significant advantage.

Micro-adjustments do not disrupt user experience.

They refine it.

Continuous refinement transforms design from static artifact to adaptive interface.

3.4 Ethical Engagement vs Manipulation

Engagement design must respect autonomy.

There is a boundary between persuasion and exploitation.

Dark patterns inflate short-term metrics.

Artificial urgency.

Hidden unsubscribe paths.

Obscured pricing structures.

Such tactics may increase clicks temporarily.

They erode trust over time.

Guardrails ensure ethical boundaries remain intact.

Agentic systems must embed constraints:

- Maximum notification frequency.

- Clear opt-out pathways.

- Transparent algorithmic logic where appropriate.

Optimization must align with brand values.

Short-term metric maximization cannot override long-term trust preservation.

Consider Spotify.

Playlist recommendations increase listening time and engagement.

However, users retain control over playback.

Content diversity prevents filter bubbles from becoming restrictive.

Transparency around personalization reinforces trust.

Ethical engagement sustains loyalty.

Sustained loyalty generates durable growth.

In agentic systems, engagement is powerful.

Responsible engagement is strategic.

4. Retention Intelligence Systems

Retention is the backbone of value.

Acquisition attracts attention.

Retention builds business.

Acquisition fills the funnel.

Retention compounds lifetime value.

In agentic environments, retention cannot rely on periodic analysis alone.

It requires continuous sensing, predictive modeling, and automated intervention.

Retention intelligence transforms churn from a retrospective metric into a preventable event.

4.1 Churn Probability Modeling

Machine learning models estimate churn likelihood at the user level.

They analyze declining usage patterns.

Reduced session frequency.

Shortened session duration.

Feature abandonment signals.

Drop-offs in transaction activity.

Models assign dynamic risk scores to each user.

These scores update as new behavioral data arrives.

Risk segmentation becomes granular.

High-risk users surface immediately.

Moderate-risk cohorts receive monitoring.

Low-risk users remain in steady-state engagement flows.
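
As a rough illustration of dynamic risk scoring, the sketch below uses a logistic function over the signals listed above. The coefficients, the bias, and the tier cut-offs are invented for this example; a real model would learn them from labelled churn data.

```python
import math

# Hypothetical coefficients, not learned from any real dataset.
WEIGHTS = {
    "session_freq_drop": 2.0,    # fractional drop in sessions vs prior period
    "session_length_drop": 1.0,  # fractional drop in average session length
    "features_abandoned": 0.5,   # count of previously used features now idle
    "transaction_drop": 1.5,     # fractional drop in transaction activity
}
BIAS = -3.0


def churn_probability(signals: dict[str, float]) -> float:
    """Logistic score in (0, 1); missing signals are treated as zero."""
    z = BIAS + sum(w * signals.get(name, 0.0) for name, w in WEIGHTS.items())
    return 1.0 / (1.0 + math.exp(-z))


def risk_tier(p: float) -> str:
    """Map a probability to the three tiers described in the text."""
    if p >= 0.6:
        return "high"
    if p >= 0.3:
        return "moderate"
    return "low"


declining = {
    "session_freq_drop": 0.8,
    "session_length_drop": 0.5,
    "features_abandoned": 3,
    "transaction_drop": 0.9,
}
print(risk_tier(churn_probability(declining)))
print(risk_tier(churn_probability({})))
```

Re-scoring a user whenever new behavioral data arrives is what makes the score dynamic rather than a periodic report.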

Churn modeling also identifies contributing factors.

Was the decline driven by onboarding friction?

Feature irrelevance?

Pricing sensitivity?

External competition?

Predictive models do not merely classify risk.

They diagnose it.

This diagnostic capability enables targeted mitigation rather than blanket retention campaigns.

Retention intelligence begins with probabilistic foresight.

4.2 Early Warning Signals

Churn rarely occurs abruptly.

Weak signals precede disengagement.

Delayed logins.

Skipped sessions.

Reduced interaction depth.

Lower response to notifications.

Declining feature exploration.

Individually, these signals may appear insignificant.

Collectively, they indicate behavioral drift.

Agentic systems detect pattern convergence early.

They monitor deviation from baseline engagement trajectories.

They compare individual behavior against cohort norms.

They identify acceleration in disengagement velocity.
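
Deviation from a user's own baseline can be sketched as a simple standardized drop. The weekly session counts, the `min_spread` floor, and the threshold of 2.0 are assumptions for illustration; real systems would typically combine several signals.

```python
from statistics import mean, stdev


def drift_score(baseline_weeks: list[float], recent_weeks: list[float],
                min_spread: float = 0.5) -> float:
    """How many baseline standard deviations recent activity sits below baseline.

    Positive values mean decline. `min_spread` avoids division by a
    near-zero spread when the baseline is almost constant.
    """
    if len(baseline_weeks) < 2 or not recent_weeks:
        raise ValueError("need at least 2 baseline weeks and 1 recent week")
    spread = max(stdev(baseline_weeks), min_spread)
    return (mean(baseline_weeks) - mean(recent_weeks)) / spread


def is_drifting(baseline_weeks: list[float], recent_weeks: list[float],
                threshold: float = 2.0) -> bool:
    return drift_score(baseline_weeks, recent_weeks) >= threshold


baseline = [5, 6, 5, 6, 5, 6]         # sessions per week during a stable period
print(is_drifting(baseline, [3, 2]))  # sharp recent decline
print(is_drifting(baseline, [5, 6]))  # activity in line with baseline
```

The same function applied to cohort averages instead of the user's own history gives the comparison against cohort norms.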

Early detection shifts retention strategy from reactive to preventive.

Intervening before disengagement stabilizes increases recovery probability.

The earlier the signal, the lower the cost of intervention.

Retention intelligence compresses detection latency.

That compression directly reduces revenue leakage.

4.3 Proactive Intervention Strategies

When churn risk rises beyond threshold, the system acts.

Offer a contextual incentive.

Provide an educational tutorial.

Surface a feature the user has not discovered.

Adjust onboarding reminders.

Personalize content recommendations.

Interventions must match context.

A price-sensitive user requires different treatment than a disengaged explorer.

A novice user may need education.

An advanced user may need new capability exposure.

Generic incentives waste margin.

Discounts without behavioral targeting reduce profitability without improving retention sustainably.

Targeted interventions maximize effect.

They align solution to root cause.

Agentic systems can test intervention variants automatically.

Which message tone performs best?

Which incentive type yields highest reactivation?

Which timing window increases response rate?

Intervention effectiveness feeds back into the model.

The system learns which levers move which segments.

Retention becomes a closed-loop intelligence system — not a campaign calendar.

4.4 Longitudinal Cohort Learning

User cohorts evolve over time.

Early adopters behave differently from mainstream users.

Long-term customers differ from new signups.

Enterprise users differ from individual consumers.

Retention systems analyze cohort behavior longitudinally.

They track retention curves across lifecycle stages.

They compare activation patterns across acquisition channels.

They observe behavioral maturation.

Cohort analysis surfaces structural insights:

Which features correlate with long-term retention?

Which onboarding flows produce durable engagement?

Which segments exhibit natural churn inflection points?

Insights refine strategy continuously.

Onboarding design improves.

Feature prioritization adjusts.

Pricing experiments recalibrate.

Retention intelligence equals prediction plus action.

But sustained advantage emerges from learning over time.

The more cohorts observed, the stronger the model.

The stronger the model, the earlier the intervention.

In agentic systems, retention is not defended episodically.

It is engineered continuously.

5. Revenue Optimization Engines

Revenue optimization requires precision.

Small pricing errors compound across scale.

Misaligned offers dilute margin.

Untargeted discounts erode profitability.

Agentic systems personalize monetization in real time.

They align pricing, packaging, and promotion to behavioral signals.

Revenue becomes dynamically tuned rather than periodically revised.

Optimization shifts from static price lists to adaptive monetization engines.

5.1 Dynamic Pricing Models

Pricing adjusts to demand conditions.

It responds to supply fluctuations.

It reacts to competitive pressure.

It reflects contextual urgency.

Consider Uber.

Surge pricing balances supply and demand dynamically.

When demand outpaces available drivers, prices increase to restore equilibrium.

When supply exceeds demand, prices normalize to stimulate activity.

Dynamic pricing increases market efficiency.

It reduces wait times.

It optimizes asset utilization.

It aligns willingness to pay with resource availability.

Agentic pricing models extend this concept beyond logistics.

They adjust subscription tiers based on usage intensity.

They experiment with packaging structures.

They modulate promotional exposure.

However, dynamic pricing must incorporate fairness thresholds.

Excessive volatility undermines perceived stability.

Predictive models should incorporate elasticity constraints and trust sensitivity indicators.

Revenue optimization is not merely about maximizing price.

It is about maximizing sustainable value exchange.

5.2 Offer Personalization

Uniform discounting is economically inefficient.

Not every customer requires incentive.

Discount offers should vary by user value profile.

High-value customers receive tailored benefits that reinforce loyalty — exclusive features, premium support, or loyalty rewards.

Low-engagement users receive reactivation incentives calibrated to regain activity without unnecessary margin sacrifice.

Medium-value users may receive cross-sell recommendations rather than price reductions.

Agentic systems evaluate:

- Lifetime value projections.

- Churn probability scores.

- Engagement intensity.

- Historical discount responsiveness.

Personalization maximizes margin by targeting incentives where marginal return is highest.

It prevents over-subsidization of already-loyal users.

It avoids under-investment in high-potential segments.

Offer optimization becomes a portfolio problem.

Capital (in the form of discounts or benefits) is deployed where return probability justifies cost.
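
The portfolio framing can be sketched as a budgeted allocation. Everything here is hypothetical: the `OfferCandidate` fields, the uplift estimates, and the greedy rule are one simple way to express the idea, not a prescribed method.

```python
from dataclasses import dataclass


@dataclass
class OfferCandidate:
    user_id: str
    cost: float            # cost of the discount or benefit (assumed > 0)
    retained_value: float  # projected lifetime value preserved if the offer works
    uplift: float          # estimated gain in retention probability from the offer

    @property
    def expected_net(self) -> float:
        return self.uplift * self.retained_value - self.cost


def allocate(candidates: list[OfferCandidate], budget: float) -> list[str]:
    """Greedy allocation: fund positive-return offers, best return per unit cost first."""
    worthwhile = [c for c in candidates if c.expected_net > 0]
    ranked = sorted(worthwhile, key=lambda c: c.expected_net / c.cost, reverse=True)
    funded = []
    for c in ranked:
        if c.cost <= budget:
            funded.append(c.user_id)
            budget -= c.cost
    return funded


candidates = [
    OfferCandidate("u_loyal", cost=10, retained_value=500, uplift=0.01),
    OfferCandidate("u_risk", cost=10, retained_value=300, uplift=0.20),
    OfferCandidate("u_mid", cost=20, retained_value=400, uplift=0.10),
]
print(allocate(candidates, budget=25))
```

The already-loyal user is never funded because the discount costs more than the retention it buys, which is the over-subsidization the text warns against.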

This converts promotional strategy from mass broadcasting to micro-allocation.

5.3 Elasticity Detection

Revenue engines must understand demand elasticity.

Agentic systems estimate price sensitivity continuously.

They test small price variations across segments.

They observe conversion deltas.

They analyze churn response to price adjustments.

Minor price changes reveal underlying demand curves.

Elasticity detection informs pricing boundaries.

If demand remains stable despite price increases, headroom exists.

If small increases trigger sharp churn, sensitivity is high.
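
A small price test can be turned into an elasticity estimate with the standard midpoint (arc) formula. The prices and quantities below are made-up numbers, and `arc_elasticity` is a hypothetical helper name.

```python
def arc_elasticity(p1: float, q1: float, p2: float, q2: float) -> float:
    """Midpoint elasticity: percent change in quantity over percent change in price."""
    pct_quantity = (q2 - q1) / ((q1 + q2) / 2)
    pct_price = (p2 - p1) / ((p1 + p2) / 2)
    return pct_quantity / pct_price


# Segment 1: price rises from 10 to 11, conversions barely move.
stable = arc_elasticity(10, 1000, 11, 980)
# Segment 2: same price change, conversions fall sharply.
sensitive = arc_elasticity(10, 1000, 11, 800)

print(f"stable segment:    {stable:.2f}")
print(f"sensitive segment: {sensitive:.2f}")
```

A magnitude below 1 suggests headroom, while a magnitude above 1 signals high sensitivity. Estimates from a single small test are noisy, so in practice they would be repeated and pooled per segment.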

Elasticity is rarely uniform.

Enterprise customers may tolerate premium pricing.

Price-sensitive segments may require tiered structures.

Emerging markets may respond differently from mature ones.

Dynamic elasticity modeling enables segmentation-specific pricing strategies.

It also informs bundling logic.

A feature that exhibits high standalone elasticity may perform better within a bundle.

Revenue optimization becomes data-driven experimentation.

Over time, elasticity maps become competitive advantage.

They reveal monetization leverage points invisible to competitors relying on static pricing.

5.4 Revenue vs Trust Trade-Offs

Revenue optimization without restraint damages brand equity.

Aggressive upselling.

Hidden fees.

Confusing pricing tiers.

These tactics increase short-term revenue but erode long-term trust.

Trust is an economic asset.

It reduces churn.

It lowers customer acquisition cost.

It increases lifetime value.

Revenue engines must therefore incorporate trust sensitivity signals.

Customer sentiment analysis.

Complaint frequency.

Refund rates.

Subscription downgrade patterns.

Transparency preserves trust.

Clear pricing communication.

Explicit value explanation.

Predictable billing structures.

Agentic monetization must operate within ethical and reputational guardrails.

Short-term maximization cannot override long-term relationship economics.

Balance matters.

Revenue growth should reinforce loyalty, not undermine it.

In agentic systems, the most powerful monetization strategy is sustainable monetization.

Precision drives profit.

Trust sustains it.

6. Reinforcement Learning in UX and Pricing

Reinforcement learning formalizes adaptation.

It converts experimentation into structured learning.

Actions generate reward signals.

Reward signals influence future action selection.

Over time, the system converges toward policies that maximize defined objectives.

Unlike static rule engines, reinforcement learning (RL) operates through interaction.

The system tests.

The environment responds.

The policy updates.

In UX and pricing contexts, this creates continuously optimizing decision frameworks.

But power without design discipline creates distortion.

6.1 Reward Function Design

Everything begins with the reward function.

Define what success means.

Engagement.

Revenue.

Retention.

Customer satisfaction.

Margin contribution.

Reward functions encode strategic intent mathematically.

If engagement alone is rewarded, clickbait patterns may emerge.

If revenue alone is optimized, pricing aggressiveness may increase unsustainably.

If retention alone is prioritized, growth experimentation may stagnate.

The reward function becomes the moral and strategic compass of the system.

It translates executive priorities into optimization targets.

Multi-objective reward functions often perform better.

For example:

Revenue weighted by churn probability.

Engagement weighted by satisfaction scores.

Pricing uplift constrained by complaint rates.
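
The three examples above can be combined into one reward signal. This is a sketch under stated assumptions: the weights, the complaint cap, and the penalty scale are invented, and the inputs are assumed to be pre-normalized to comparable units.

```python
def reward(revenue: float, churn_prob: float, engagement: float,
           satisfaction: float, complaint_rate: float, *,
           engagement_weight: float = 1.0,
           complaint_cap: float = 0.02,
           complaint_penalty: float = 100.0) -> float:
    """Multi-objective reward with hypothetical weights.

    - revenue is discounted by the probability the user churns
    - engagement only counts to the extent users are satisfied (0..1)
    - complaint rates above the cap are penalized linearly
    """
    retained_revenue = revenue * (1.0 - churn_prob)
    quality_engagement = engagement * satisfaction
    excess_complaints = max(0.0, complaint_rate - complaint_cap)
    return (retained_revenue
            + engagement_weight * quality_engagement
            - complaint_penalty * excess_complaints)


aggressive = reward(12.0, churn_prob=0.5, engagement=10, satisfaction=0.3,
                    complaint_rate=0.06)
balanced = reward(10.0, churn_prob=0.1, engagement=8, satisfaction=0.9,
                  complaint_rate=0.01)
print(aggressive, balanced)
```

The aggressive policy earns more raw revenue and engagement but scores lower once churn, satisfaction, and complaints are priced in, which is the behavior the reward is meant to encode.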

Poor reward design creates distortion.

The system will optimize exactly what is encoded — not what is implied.

Reward clarity prevents unintended consequences.

In agentic systems, governance begins at the reward function level.

6.2 Exploration vs Exploitation

Reinforcement learning must balance exploration and exploitation.

Exploration tests new strategies.

New interface variations.

Alternative pricing tiers.

Different recommendation sequences.

Exploitation leverages known winners.

Scaling high-performing UX flows.

Expanding validated pricing strategies.

Increasing exposure to proven conversion paths.

Balance is essential.

Too much exploration creates instability and user confusion.

Too little exploration prevents discovery of superior policies.

Agentic systems manage this balance dynamically.

In early lifecycle stages, exploration carries more weight.

As certainty improves, exploitation dominates.

Bandit algorithms, whether multi-armed or contextual, shift traffic toward variants as confidence in their performance grows.

Uncertainty guides experimentation intensity.

This structured balance enables continuous learning without sacrificing stability.

6.3 Safe Reinforcement Patterns

Autonomous adaptation must operate within safety constraints.

Staged rollout protects users from systemic error.

Test on small segments first.

Validate reward accuracy.

Monitor unintended behavior.

Scale gradually as confidence increases.

Safety mechanisms include:

- Anomaly detection triggers.

- Performance guardrails.

- Rollback protocols.

- Human-in-the-loop escalation thresholds.

If revenue spikes but churn simultaneously increases, intervention is required.

If UX optimization increases click rate but decreases task completion, distortion is detected.
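
A staged rollout with a guardrail metric can be sketched as a single decision function. The exposure stages, the 2% regression limit, and the function name are hypothetical.

```python
# Hypothetical exposure stages: fraction of users receiving the new policy.
STAGES = [0.01, 0.05, 0.25, 1.0]


def next_exposure(current: float, primary_lift: float,
                  guardrail_regression: float,
                  max_regression: float = 0.02) -> float:
    """Decide the next exposure level for a policy under test.

    primary_lift: relative change in the optimized metric (e.g. click rate)
    guardrail_regression: relative worsening of a counter-metric
        (e.g. churn up, or task completion down); positive means worse
    """
    if guardrail_regression > max_regression:
        return 0.0                      # roll back: the guardrail was breached
    if primary_lift <= 0:
        return current                  # hold: no evidence of benefit yet
    larger = [s for s in STAGES if s > current]
    return larger[0] if larger else current  # expand one stage at a time


print(next_exposure(0.01, primary_lift=0.08, guardrail_regression=0.00))
print(next_exposure(0.05, primary_lift=0.10, guardrail_regression=0.05))
```

In the second call the optimized metric improves, yet the policy is rolled back because the counter-metric regressed beyond the limit.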

Safety reduces systemic risk.

It prevents runaway optimization that harms users or brand equity.

Reinforcement systems must incorporate both performance maximization and constraint satisfaction.

Optimization without boundaries is volatility.

Safe reinforcement sustains trust while enabling progress.

6.4 Continuous Policy Updating

Policies are not static artifacts.

They evolve with data.

User behavior shifts.

Market conditions change.

Competitive pressures fluctuate.

Reinforcement models retrain periodically or continuously depending on signal velocity.

New data refines value estimation.

State representations improve.

Action selection becomes more precise.

Learning never stops.

Each interaction enriches the policy landscape.

However, strategic intent remains the anchor.

Reward functions remain aligned with enterprise objectives.

Ethical guardrails remain constant.

Risk thresholds remain defined.

Behavior adapts continuously.

Strategy provides direction.

Reinforcement learning provides acceleration.

In agentic UX and pricing systems, adaptation is not occasional recalibration.

It is embedded evolution — guided by intent, constrained by governance, and fueled by interaction.

7. Experimentation at Scale

Experimentation is the engine of learning.

Without controlled experimentation, optimization devolves into speculation.

Traditional A/B testing compares two variants.

Variant A versus Variant B.

Control versus treatment.

Manual analysis after statistical significance.

This model works — but it is limited.

Agentic experimentation expands scale, speed, and autonomy.

Experiments become continuous rather than episodic.

Learning becomes embedded in the product lifecycle.

7.1 Multi-Variant Testing

Binary testing restricts exploration bandwidth.

Testing multiple variations simultaneously increases search efficiency.

Different headlines.

Different layouts.

Different pricing bundles.

Different onboarding flows.

Multi-variant testing evaluates configuration combinations in parallel.

This accelerates convergence toward optimal patterns.

Agentic systems can allocate traffic dynamically across variants based on real-time performance.

Underperforming variants receive less exposure.

Promising variants receive more.

This reduces time to insight.

It also uncovers interaction effects.

Sometimes Variant C performs best only when combined with Layout D.

Multi-dimensional testing reveals such dependencies.

Optimization becomes combinatorial rather than linear.

Faster convergence produces faster compounding gains.

7.2 Sequential Testing

Traditional experiments run to completion before evaluation.

Sequential testing introduces adaptive adjustment.

Adjust experiments mid-cycle.

Stop underperforming variants early to conserve traffic and reduce opportunity cost.

Increase exposure to emerging winners before formal conclusion.

This reduces wasted learning cycles.

Sequential methodologies — such as Bayesian updating or adaptive bandit strategies — refine probability estimates continuously.

Statistical confidence evolves in real time.

Agentic systems can rebalance exposure automatically as evidence strengthens.
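
Bayesian updating for a two-variant test can be sketched with Beta posteriors and Monte Carlo sampling. The conversion counts, the 95% decision threshold, and the function names are assumptions for illustration.

```python
import random


def prob_b_beats_a(conv_a: int, n_a: int, conv_b: int, n_b: int,
                   draws: int = 20_000, seed: int = 0) -> float:
    """Estimate P(rate_B > rate_A) from Beta(1, 1) priors updated with observed data."""
    rng = random.Random(seed)
    wins = 0
    for _ in range(draws):
        rate_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        wins += rate_b > rate_a
    return wins / draws


def decide(p_b_better: float, threshold: float = 0.95) -> str:
    """Hypothetical stopping rule applied each time new evidence arrives."""
    if p_b_better >= threshold:
        return "ship_b"
    if p_b_better <= 1 - threshold:
        return "stop_b"
    return "continue"


print(decide(prob_b_beats_a(50, 1000, 80, 1000)))  # B converts clearly better
print(decide(prob_b_beats_a(50, 1000, 50, 1000)))  # no evidence either way
```

Checking the posterior repeatedly with a fixed threshold changes the error characteristics compared with a single fixed-horizon test, so the threshold deserves deliberate calibration rather than a default.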

This shortens feedback loops.

It increases capital efficiency.

It reduces the latency between insight and impact.

Experimentation becomes fluid rather than fixed-duration.

7.3 Automated Hypothesis Generation

Experimentation often stalls due to idea scarcity.

Human teams generate hypotheses based on observation and intuition.

Agentic systems expand hypothesis generation capacity.

AI detects friction points in user journeys.

Identifies anomalous drop-off clusters.

Surfaces engagement discontinuities.

Based on these signals, it proposes alternatives.

Change CTA placement.

Simplify onboarding steps.

Alter pricing framing.

Adjust content sequencing.

Discovery feeds experimentation automatically.

Patterns become prompts for test creation.

Instead of waiting for quarterly planning cycles, hypothesis generation becomes continuous.

This transforms experimentation from reactive to proactive.

The system does not merely validate ideas.

It produces them.

7.4 Experiment Portfolio Management

At scale, experimentation requires orchestration.

Too many concurrent tests create signal interference.

Too few limit learning velocity.

Experiment portfolio management structures the pipeline.

Balance incremental improvements with breakthrough experiments.

Incremental tests refine conversion flows.

Breakthrough experiments explore new product mechanics.

Risk exposure must be diversified.

Low-risk optimization tests sustain steady gains.

High-risk strategic experiments explore asymmetric upside.

Portfolio-level dashboards track:

- Active experiments.

- Confidence levels.

- Resource allocation.

- Cumulative impact contribution.

Consider the experimentation culture at Netflix.

Personalization refinement, interface evolution, and recommendation tuning are driven by continuous testing.

Experimentation is not an initiative.

It is infrastructure.

Learning becomes perpetual.

In agentic systems, experimentation is the operating system of growth.

8. Guardrails for Autonomous Optimization

Autonomy without governance invites systemic risk.

The faster a system adapts, the faster it can amplify error.

Optimization engines operate at scale.

Scale magnifies both success and failure.

Boundaries therefore are not constraints on innovation.

They are structural protections.

Optimization must respect clearly defined limits.

Guardrails convert autonomy from uncontrolled acceleration into disciplined evolution.

8.1 Ethical Constraints

Ethical design must be codified, not implied.

Prohibit manipulative tactics explicitly.

No hidden subscription traps.

No deceptive urgency timers.

No dark pattern interface flows.

Protect vulnerable users.

Age-sensitive segments.

Financially at-risk customers.

Users exhibiting addictive behavior patterns.

Agentic systems must embed vulnerability detection logic where appropriate.

Define acceptable experimentation boundaries.

What metrics may be optimized?

What behaviors are off-limits?

What psychological levers are unacceptable?

Reward functions should penalize exploitative outcomes.

Ethical constraints must be integrated at model design, not appended afterward.

Optimization that violates ethical boundaries may increase short-term performance.

It destroys long-term legitimacy.

Responsible autonomy preserves durable value.

8.2 Brand Safeguards

Brand is a strategic asset.

Autonomous systems must reinforce, not dilute, identity.

Ensure tone remains consistent across personalization variants.

Dynamic messaging should adapt content, not character.

Avoid intrusive notifications.

Frequency caps protect user experience.

Channel discipline prevents overexposure.

Context awareness reduces interruption friction.

Preserve brand identity across experiments.

A pricing test should not contradict positioning.

A UX optimization should not conflict with brand aesthetics.

A monetization experiment should not erode perceived fairness.

Brand safeguard parameters can be encoded as constraints within experimentation systems.

Certain colors, tones, or messaging patterns may be restricted.

Certain pricing volatility thresholds may be capped.

Optimization must operate inside brand architecture.

Sustained trust is more valuable than marginal metric lift.

8.3 Regulatory Compliance

Regulatory environments vary by jurisdiction.

Pricing transparency laws differ by region.

Consumer protection requirements vary across markets.

Data usage must comply with evolving privacy frameworks.

Agentic optimization must integrate compliance checks directly into system logic.

Before deploying pricing experiments, verify disclosure compliance.

Before personalizing offers, validate consent scope.

Before storing telemetry, ensure lawful basis.

Compliance cannot rely solely on manual review.

Automated validation rules must operate in parallel with optimization engines.

If a proposed action violates regulatory constraints, execution should be blocked automatically.

Regional segmentation may be required.

An experiment allowed in one geography may be prohibited in another.
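
An automated compliance gate might look like the sketch below. The rule tables, region labels, consent names, and the `OptimizationAction` type are all hypothetical; real rules would be defined and maintained by legal and compliance teams.

```python
from dataclasses import dataclass


@dataclass
class OptimizationAction:
    kind: str                               # e.g. "pricing_experiment"
    region: str                             # placeholder region label
    consents: frozenset[str] = frozenset()  # consent scopes granted by the user


# Hypothetical rule tables, for illustration only.
PROHIBITED_BY_REGION = {"REGION_B": {"pricing_experiment"}}
CONSENT_REQUIRED = {"personalized_offer": "personalization"}


def violations(action: OptimizationAction) -> list[str]:
    """Return every rule the proposed action would break (empty list if none)."""
    found = []
    if action.kind in PROHIBITED_BY_REGION.get(action.region, set()):
        found.append(f"{action.kind} is not permitted in {action.region}")
    needed = CONSENT_REQUIRED.get(action.kind)
    if needed is not None and needed not in action.consents:
        found.append(f"missing consent: {needed}")
    return found


def execute(action: OptimizationAction) -> str:
    """Block automatically when any rule is violated; otherwise proceed."""
    problems = violations(action)
    if problems:
        return "blocked: " + "; ".join(problems)
    return "executed"


print(execute(OptimizationAction("pricing_experiment", "REGION_A")))
print(execute(OptimizationAction("pricing_experiment", "REGION_B")))
print(execute(OptimizationAction("personalized_offer", "REGION_A")))
```

Running the check inside `execute` means the optimization engine cannot bypass it: the same experiment proceeds in one region and is blocked in another without manual review.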

Embedding compliance into decision logic reduces legal exposure and reputational damage.

Governed autonomy prevents reactive crisis management.

8.4 Human Override Protocols

Even well-designed systems encounter anomalies.

Unexpected feedback loops.

Model drift.

External shocks.

Escalation pathways protect stability.

If anomaly thresholds are exceeded, pause optimization automatically.

If a revenue spike coincides with a churn surge, trigger a review.

If engagement rises but complaint volume also increases, flag it for investigation.
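
These three escalation rules translate almost directly into code. The following is a minimal sketch. The `Snapshot` fields and both thresholds are assumptions made for illustration, and sensible values depend on how noisy a product's baseline metrics are.

```python
from dataclasses import dataclass


@dataclass
class Snapshot:
    """Period-over-period changes as fractions (0.10 means +10%)."""
    revenue_change: float
    churn_change: float
    engagement_change: float
    complaint_change: float
    anomaly_score: float


# Hypothetical thresholds; tune them against the product's normal variance.
ANOMALY_LIMIT = 3.0
SPIKE = 0.10


def escalation_actions(s: Snapshot) -> list[str]:
    """Map a metrics snapshot to the escalation steps it should trigger."""
    actions = []
    if s.anomaly_score > ANOMALY_LIMIT:
        actions.append("pause_optimization")
    if s.revenue_change > SPIKE and s.churn_change > SPIKE:
        actions.append("trigger_review")
    if s.engagement_change > 0 and s.complaint_change > 0:
        actions.append("flag_investigation")
    return actions
```

The function only decides what should happen. Routing each action to the right person or system is left to the surrounding platform.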

Human override protocols must be explicit.

Who is notified?

What authority level can pause systems?

What rollback mechanisms exist?

Rapid intervention prevents cascading damage.

Once the review is complete, human operators can resume optimization with recalibrated settings.

Autonomy should be reversible.

Optimization engines must allow manual control when strategic judgment requires intervention.
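
Reversibility can be made concrete with a controller that records every configuration it applies. The class below is a hypothetical sketch, not a description of any particular platform. It shows one possible shape for pause, resume, and rollback.

```python
class OptimizationController:
    """Keeps a history of applied configurations so changes can be undone."""

    def __init__(self, initial_config: dict):
        self._history = [dict(initial_config)]
        self.paused = False

    @property
    def config(self) -> dict:
        """Return a copy of the currently active configuration."""
        return dict(self._history[-1])

    def apply(self, changes: dict) -> bool:
        """Apply an automated change unless a human has paused the engine."""
        if self.paused:
            return False
        self._history.append({**self._history[-1], **changes})
        return True

    def pause(self) -> None:
        self.paused = True

    def resume(self) -> None:
        self.paused = False

    def rollback(self, steps: int = 1) -> dict:
        """Undo the last `steps` changes, never dropping the initial config."""
        keep = max(1, len(self._history) - steps)
        self._history = self._history[:keep]
        return self.config
```

While the controller is paused, automated changes are rejected rather than queued, so nothing is applied silently once a human resumes the engine.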

Governance strengthens autonomy.

It builds executive confidence.

It protects long-term value.

It ensures adaptation operates within intentional design.

Autonomy is powerful.

Guarded autonomy is sustainable.

9. Case Study: Continuous Optimization in Practice

Theory becomes credible when it manifests at scale.

Continuous optimization is not an abstract framework.

It operates inside the world’s most sophisticated digital platforms.

Consider Amazon.

Recommendation systems adjust constantly.

User behavior informs ranking in real time.

Click-through patterns influence product ordering.

Purchase history refines relevance weighting.

Search queries recalibrate intent modeling.

Algorithms continuously re-rank products based on predicted conversion probability.
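
To make the idea of re-ranking by predicted conversion concrete, here is a toy sketch. It is not Amazon's system. It assumes a simple logistic model, and the feature names and weights are invented for the example.

```python
import math


def predicted_conversion(features: dict, weights: dict, bias: float) -> float:
    """Logistic score in (0, 1) from a weighted sum of features."""
    z = bias + sum(weights.get(name, 0.0) * value for name, value in features.items())
    return 1.0 / (1.0 + math.exp(-z))


def rerank(products: list[dict], weights: dict, bias: float = 0.0) -> list[str]:
    """Return product ids ordered by descending predicted conversion."""
    scored = [
        (predicted_conversion(p["features"], weights, bias), p["id"])
        for p in products
    ]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [product_id for _, product_id in scored]
```

In a live system the weights would be re-estimated as new telemetry arrives, which is what makes the ranking continuous rather than fixed.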

Pricing experiments test elasticity.

Small price variations across segments reveal demand sensitivity.

Promotional structures adapt based on observed response curves.

Inventory levels influence dynamic adjustments.
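
Demand sensitivity is usually summarized as price elasticity. The sketch below uses the standard arc (midpoint) elasticity formula on two observed price and quantity points. The function name and the sample numbers in the usage note are illustrative, not data from any real platform.

```python
def arc_elasticity(p1: float, q1: float, p2: float, q2: float) -> float:
    """Midpoint price elasticity of demand between two observations.

    Values below -1 indicate elastic demand: quantity falls
    proportionally more than price rises.
    """
    if p1 == p2:
        raise ValueError("prices must differ to measure elasticity")
    if q1 + q2 == 0:
        raise ValueError("at least one quantity must be non-zero")
    pct_quantity = (q2 - q1) / ((q1 + q2) / 2)
    pct_price = (p2 - p1) / ((p1 + p2) / 2)
    return pct_quantity / pct_price
```

For example, if raising a price from 10 to 11 drops weekly units from 100 to 90, `arc_elasticity(10, 100, 11, 90)` returns about -1.11, which suggests that segment is mildly price sensitive.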

Feedback loops refine continuously.

Each interaction produces telemetry.

Telemetry updates predictive models.

Updated models adjust exposure and pricing logic.

Governance ensures compliance.

Regional pricing transparency rules are respected.

Consumer protection constraints are embedded in logic.

Experimentation operates within defined risk thresholds.

The architecture integrates engagement, retention, and revenue loops.

Engagement increases exposure.

Retention increases lifetime value.

Revenue optimization maximizes margin per interaction.

Each loop reinforces the others.

Optimization is not a feature.

It is infrastructure.

Or consider Netflix.

Personalization improves over time.

Thumbnail images are dynamically selected per user.

Content rows reorder based on predicted interest.

Recommendations adapt to viewing context and device.

Completion rates guide recommendation refinement.

If users abandon certain genres mid-episode, the weighting for those genres shifts down.

If binge behavior increases for a category, exposure expands.

If new releases accelerate engagement, ranking logic adapts.
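
The logic of shifting weight toward content that people finish can be sketched in a few lines. This is an illustrative toy, not Netflix's algorithm. The target completion rate, the learning rate, and the genre names are assumptions.

```python
def update_genre_weights(
    weights: dict,
    completion_rates: dict,
    target: float = 0.5,
    learning_rate: float = 0.2,
) -> dict:
    """Raise weights for genres completed above target, lower the rest, renormalize."""
    adjusted = {}
    for genre, weight in weights.items():
        # Genres with no completion data keep their relative weight.
        rate = completion_rates.get(genre, target)
        adjusted[genre] = max(0.0, weight * (1 + learning_rate * (rate - target)))
    total = sum(adjusted.values())
    if total == 0:
        return dict(weights)
    return {genre: weight / total for genre, weight in adjusted.items()}
```

Renormalizing keeps the weights summing to one, so expanding exposure for one genre necessarily reduces it for others.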

Experimentation permeates the interface.

Artwork variations.

Autoplay behavior.

Content sequencing strategies.
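
Interface experiments of this kind are often framed as multi-armed bandit problems. The sketch below uses epsilon-greedy selection, one of the simplest bandit strategies. It is offered as an assumption about how such testing could work, not as a description of Netflix's implementation, and the arm names in the usage note are hypothetical.

```python
import random


class EpsilonGreedy:
    """Mostly show the best-performing variant, occasionally explore others."""

    def __init__(self, arms, epsilon: float = 0.1, seed=None):
        self.arms = list(arms)
        self.epsilon = epsilon
        self.pulls = {arm: 0 for arm in self.arms}
        self.rewards = {arm: 0.0 for arm in self.arms}
        self._rng = random.Random(seed)

    def mean(self, arm) -> float:
        """Average observed reward for an arm (0.0 if never shown)."""
        pulls = self.pulls[arm]
        return self.rewards[arm] / pulls if pulls else 0.0

    def choose(self):
        """Explore with probability epsilon, otherwise exploit the best arm."""
        if self._rng.random() < self.epsilon:
            return self._rng.choice(self.arms)
        return max(self.arms, key=self.mean)

    def record(self, arm, reward: float) -> None:
        """Log the outcome of showing an arm, for example 1.0 for a play."""
        self.pulls[arm] += 1
        self.rewards[arm] += reward
```

A caller would create `EpsilonGreedy(["art_a", "art_b"])`, call `choose()` for each impression, and call `record()` with the observed outcome. Over time traffic concentrates on the stronger variant while a small share keeps testing the alternatives.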

Optimization never stops.

There is no final state.

Launch activates continuous adaptation.

Each release feeds a new learning cycle.

Compounding advantage emerges gradually.

More data improves prediction accuracy.

Better predictions improve engagement.

Improved engagement produces richer data.

Over time, the feedback loop strengthens.

Competitors may replicate features.

Replicating accumulated learning is far more difficult.

Continuous optimization transforms iteration speed into durable advantage.

10. The Product Leader as Performance Architect

The role evolves again.

The environment has changed.

The tooling has changed.

The velocity has changed.

The old PM shipped features.

Roadmap execution defined success.

Delivery cadence signaled competence.

Feature completeness marked progress.

The new PM designs loops.

Engagement loops.

Retention loops.

Revenue loops.

Learning loops.

The unit of design is the feedback system.

The old PM reported metrics.

Dashboards summarized outcomes.

Post-launch reviews diagnosed performance.

Quarterly business reviews explained variance.

The new PM supervises optimization engines.

They monitor live model behavior.

They interpret signal shifts.

They recalibrate strategic weightings.

They oversee experimentation velocity.

The old PM measured after launch.

Launch was a milestone.

Learning was retrospective.

Iteration was periodic.

The new PM encodes strategy into reward functions.

Strategic priorities translate into optimization objectives.

Growth targets become weighted parameters.

Risk tolerance becomes constraint logic.

Brand values become guardrails in system design.
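
A small sketch shows what embedding strategy in a reward function might look like. All field names, weights, and limits below are hypothetical. The point is the structure: priorities appear as weights, while risk tolerance and brand values appear as hard constraints.

```python
from dataclasses import dataclass


@dataclass
class Outcome:
    revenue: float          # revenue attributed to the action
    retention_delta: float  # change in predicted retention probability
    discount_pct: float     # discount offered, in percent
    messages_sent: int      # notifications sent to the user this week


# Hypothetical strategy parameters set by the product leader.
WEIGHTS = {"revenue": 1.0, "retention": 50.0}  # growth targets as weights
MAX_DISCOUNT_PCT = 20.0   # risk tolerance expressed as a hard constraint
MAX_MESSAGES = 3          # brand guardrail on contact frequency
PENALTY = -100.0          # reward assigned to any guardrail breach


def reward(outcome: Outcome) -> float:
    """Score an action; guardrail breaches always receive the penalty."""
    if outcome.discount_pct > MAX_DISCOUNT_PCT or outcome.messages_sent > MAX_MESSAGES:
        return PENALTY
    return (
        WEIGHTS["revenue"] * outcome.revenue
        + WEIGHTS["retention"] * outcome.retention_delta
    )
```

Changing strategy then means changing `WEIGHTS` or the limits, not rewriting the optimizer. A large fixed penalty is only one way to express a constraint, and many systems filter out disallowed actions before scoring instead.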

Strategy is not narrated.

It is embedded.

Core competencies expand.

Data literacy becomes foundational.

The modern product leader must interpret probability distributions, confidence intervals, and causal inference signals.
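
As one concrete example of this literacy, consider reading the result of an A/B test. The sketch below computes an approximate 95% confidence interval for the difference between two conversion rates using the normal (Wald) approximation. The function name is hypothetical, and the approximation assumes reasonably large samples.

```python
import math


def lift_confidence_interval(
    conversions_a: int,
    visitors_a: int,
    conversions_b: int,
    visitors_b: int,
    z: float = 1.96,
) -> tuple[float, float]:
    """Approximate CI for (rate_b - rate_a); z=1.96 gives roughly 95%."""
    rate_a = conversions_a / visitors_a
    rate_b = conversions_b / visitors_b
    difference = rate_b - rate_a
    standard_error = math.sqrt(
        rate_a * (1 - rate_a) / visitors_a + rate_b * (1 - rate_b) / visitors_b
    )
    return difference - z * standard_error, difference + z * standard_error
```

If the interval excludes zero, as it does for 100 conversions out of 1,000 versus 130 out of 1,000, the observed lift is unlikely to be noise alone. If it straddles zero, the experiment has not yet separated the variants.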

Understanding behavioral economics enhances engagement design.

Incentive structures, cognitive bias, and habit formation inform loop architecture.

Governance fluency protects trust.

Regulatory awareness, ethical design, and risk modeling become daily considerations.

Financial modeling aligns optimization with profitability.

Expected value calculations.

Elasticity modeling.

Contribution margin analysis.

Capital allocation logic.
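
Two of these calculations are simple enough to sketch directly. The helper names and the scenario figures in the usage note are made up for illustration.

```python
import math


def contribution_margin(price: float, variable_cost: float) -> float:
    """Amount each unit sold contributes toward fixed costs and profit."""
    return price - variable_cost


def expected_value(scenarios: list[tuple[float, float]]) -> float:
    """Probability-weighted payoff over (probability, payoff) scenarios."""
    total_probability = sum(probability for probability, _ in scenarios)
    if not math.isclose(total_probability, 1.0):
        raise ValueError("scenario probabilities must sum to 1")
    return sum(probability * payoff for probability, payoff in scenarios)
```

Suppose an experiment has a 30% chance of adding 50,000 in margin, a 60% chance of no effect, and a 10% chance of losing 20,000. Then `expected_value([(0.3, 50_000), (0.6, 0), (0.1, -20_000)])` comes to 13,000, which can be weighed against the cost of running the test.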

The product leader operates at the intersection of economics, psychology, data science, and strategy.

Execution remains important.

But orchestration becomes decisive.

The product leader becomes a performance architect.

They design systems that learn.

Systems that adapt.

Systems that self-correct.

Systems that compound value over time.

Their success is not measured by shipped features.

It is measured by sustained performance acceleration.

In agentic environments, leadership is about architecting intelligence.

Conclusion

Launch once marked completion.

It was the finish line.

A milestone to celebrate.

A signal that execution had concluded.

In an agentic era, launch activates intelligence.

Deployment is not an endpoint.

It is the beginning of live learning.

The system transitions from design-time assumptions to runtime adaptation.

Optimization becomes perpetual.

There is no steady state.

No final configuration.

No permanent “best version.”

Engagement loops adapt.

Triggers recalibrate.

Content sequences evolve.

Interface elements refine continuously.

Retention systems predict and intervene.

Risk signals surface early.

Personalized responses activate automatically.

Churn becomes manageable rather than inevitable.

Revenue engines personalize.

Pricing aligns with elasticity.

Offers reflect lifetime value projections.

Monetization respects both margin and trust.

Reinforcement learning encodes strategy.

Reward functions translate executive intent into mathematical incentives.

Policies evolve as new data arrives.

Behavior shifts in response to structured feedback.

Experimentation accelerates insight.

Hypotheses are generated continuously.

Variants deploy dynamically.

Learning compounds without waiting for planning cycles.

Governance maintains trust.

Ethical guardrails constrain excess.

Compliance logic protects reputation.

Human oversight preserves strategic coherence.

Continuous optimization separates leaders from laggards.

Leaders compound intelligence.

Laggards accumulate technical debt and assumption decay.

Static products decay.

They drift from market relevance.

They respond too slowly to signal shifts.

They mistake stability for strength.

Adaptive products evolve.

They sense change.

They adjust proactively.

They integrate learning into architecture.

When autonomy governs post-launch, value compounds.

Each interaction enriches the model.

Each adjustment increases precision.

Each cycle strengthens defensibility.

Compounding is subtle at first.

Marginal gains appear incremental.

Over time, they become structural advantage.

And compounding value defines enduring dominance.

Disclaimer: This post provides general information and is not tailored to any specific individual or entity. It includes only publicly available information for general awareness purposes. The author does not warrant that this post is free from errors or omissions. Views are personal.