Agent Runtime Environment (ARE) in Agentic AI — Part 3 - Memory Management

In the first part of this series, we defined the Agent Runtime Environment (ARE) as the "operating system" for autonomous intelligence. In Part 2, we explored the Execution Engine — the motor system that turns reasoning into action. Now we confront a central truth of agentic intelligence: if an agent cannot remember, it cannot meaningfully reason, plan, or act over time.

Memory management in agentic systems isn’t a “nice to have.” It’s the backbone of persistence, continuity, personalization, and reasoning. And it fundamentally distinguishes a stateless LLM wrapper from a true agentic AI system.

In this article, we will:

- Define what memory means in agentic AI.

- Explore its variants and architectural implications within an ARE.

- Explain practical patterns and implementation strategies.

- Highlight real-world challenges and emerging research.

What “Memory” Means in an Agent Runtime Environment

In cognitive systems, memory is the mechanism that allows an agent to bridge the gap between "now" and "then." For an Agentic AI running within an ARE, memory is far more than a passive database or a simple chat log; it is a first-class active resource that the runtime must allocate, optimize, and protect.

In a standard LLM interaction, "memory" is often confused with the "context window" — the limited amount of text the model can see right now. In an ARE, memory is the broader infrastructure that manages what goes into that window. It transforms a stateless model into a stateful entity capable of the following four critical functions:

Context Continuity

- The Problem: LLMs are amnesiac by design. If an agent takes 50 steps to solve a complex coding problem, the initial instructions and early discoveries will eventually slide out of the context window.

- The ARE Solution: The runtime maintains a "Thread State." It preserves the narrative arc of a task across steps, sessions, or even system restarts. This ensures that when an agent is on Step 45, it still "knows" why it took Step 1. It prevents the "drift" where agents lose track of the original goal after a long chain of reasoning.

Learning from Experience (Episodic Learning)

- The Problem: Without persistent memory, an agent that fails a specific API call today will fail it again tomorrow in the exact same way. It cannot "learn" from its mistakes because its existence resets after every session.

- The ARE Solution: The runtime implements a Feedback Loop. When an agent encounters an error and successfully corrects it, the ARE records this "Episode" (Action -> Error -> Correction). Future instances of the agent can query this episodic memory to avoid repeating known failures, effectively becoming smarter without the underlying model ever being retrained.

Personalization & User Modeling

- The Problem: A generic agent treats a CTO and a Junior Developer exactly the same, explaining basic concepts to the expert or using jargon with the novice.

- The ARE Solution: The runtime builds a Semantic Profile of the user or the organization. Over time, the ARE aggregates preferences ("User prefers concise JSON output," "User works in the EST time zone," "Project X relies on AWS"). This allows the agent to tailor its planning and communication style implicitly, anticipating needs rather than waiting for explicit instructions every time.

Persistent State for Long-Running Workflows

- The Problem: Real-world tasks take time. A "Market Research Agent" might need to scrape data, wait 4 hours for a report to generate, and then analyze it. If the script crashes or the window closes during that wait, the progress is lost.

- The ARE Solution: The runtime provides "Checkpointing." Much like a save game file, the ARE serializes the agent's entire mental state (its current plan, the variables it holds, and the next step in its queue) to durable storage. This allows agents to "sleep" while waiting for external events and "wake up" days later to resume exactly where they left off, enabling asynchronous, long-horizon autonomy.
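As a rough illustration of checkpointing, the sketch below serializes a small agent state to JSON and reloads it. The `AgentState` fields and both function names are hypothetical, chosen for this example only; a production runtime would likely store far more and use a database or object store rather than a local file.

```python
import json
import os
import tempfile
from dataclasses import dataclass, field, asdict


@dataclass
class AgentState:
    """Hypothetical snapshot of what the agent needs in order to resume."""
    task_id: str
    plan: list[str]
    next_step: int = 0
    variables: dict = field(default_factory=dict)


def save_checkpoint(state: AgentState, path: str) -> None:
    """Write the state to a temp file, then atomically swap it into place."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    with os.fdopen(fd, "w", encoding="utf-8") as handle:
        json.dump(asdict(state), handle)
    # os.replace is atomic, so a crash never leaves a half-written checkpoint.
    os.replace(tmp_path, path)


def load_checkpoint(path: str) -> AgentState:
    """Rebuild the agent state from the last completed checkpoint."""
    with open(path, encoding="utf-8") as handle:
        return AgentState(**json.load(handle))
```

Writing to a temporary file first is one simple way to keep the last good checkpoint intact if the process dies mid-write.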

Without this managed memory layer, even the most sophisticated model is just a reactive engine — brilliant in the moment but incapable of growth. The ARE’s memory system is what grants an agent temporal existence, allowing it to own a task from start to finish, regardless of how long that takes.

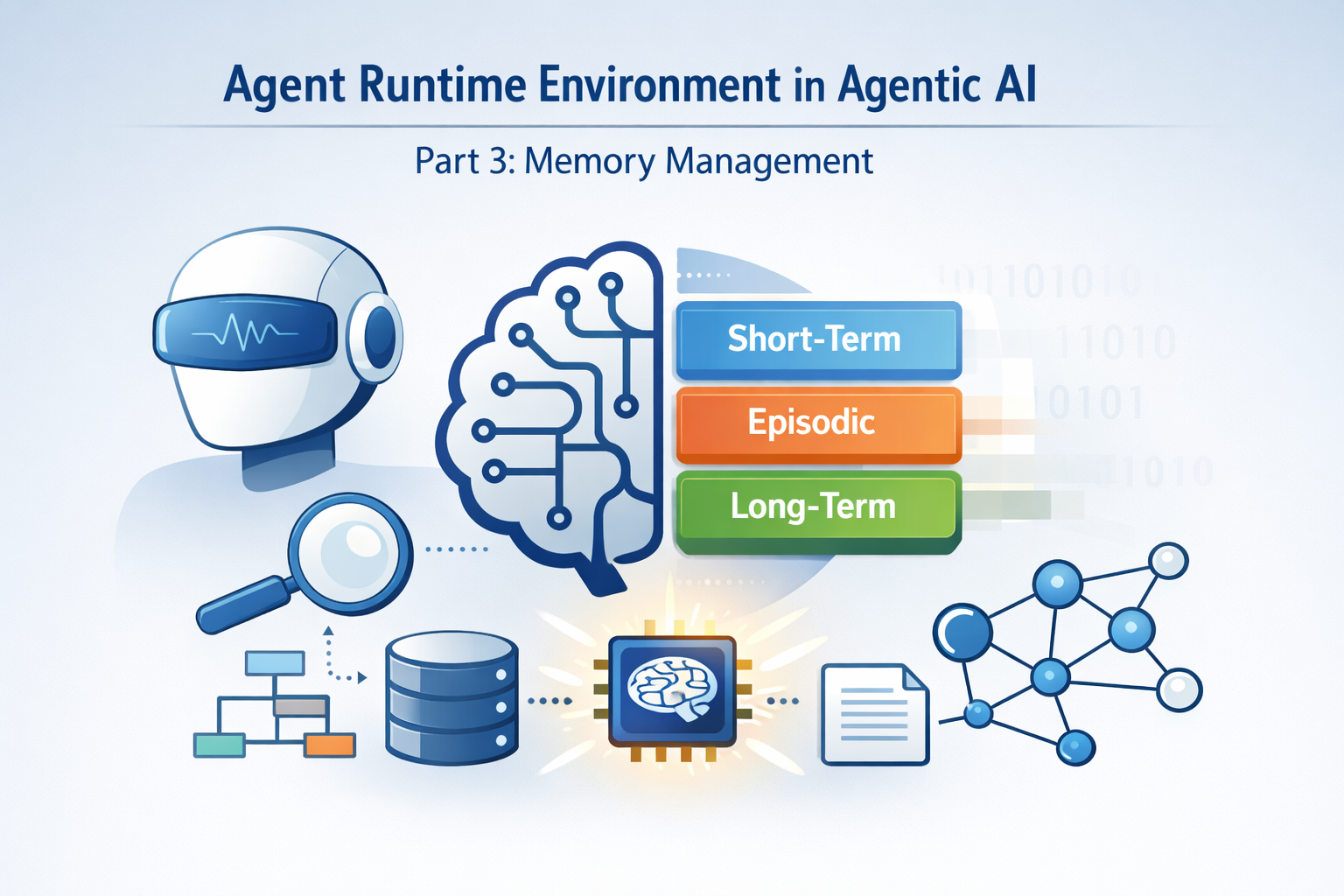

Memory Types and Their Roles

To replicate human-like reliability, a modern Agent Runtime Environment (ARE) cannot rely on a single "bucket" for information. Instead, it adopts a layered memory architecture inspired by human cognitive science but engineered for high-throughput computing.

In a robust ARE, memory is segmented not just by how long it lasts, but by what function it serves.

Short-Term Memory (Working Memory)

The "Scratchpad" of Reasoning

Short-term memory is the volatile, high-speed context the agent uses to survive the "now." It lives entirely within the active session, holding the immediate conversation history, the output of the last tool call, and the temporary variables needed for the current reasoning step.

- Where it lives: Inside the LLM’s active Context Window and the runtime’s ephemeral execution threads.

- The ARE’s Role: The runtime acts as a ruthless editor here. Since context windows are expensive and finite (even with 1M+ token models), the ARE performs Context Hygiene. It actively summarizes older turns of the conversation, truncates massive error logs, and prioritizes the most relevant "scratchpad" notes to keep the agent focused without overflowing its cognitive buffer.

- Why it matters: Without this, an agent is incapable of multi-turn reasoning. It would forget your instruction to "fix the bug" the moment it opened the file to look for it.

Episodic Memory

The "Autobiography" of Experience

If Short-Term memory is what the agent is thinking now, Episodic memory is what the agent did in the past. It stores specific, time-stamped experiences: the actions taken, the outcomes observed, and the decisions made during previous tasks.

- Where it lives: Typically in a Vector Database (like Pinecone, Weaviate, or Milvus), where past "episodes" are embedded for semantic retrieval.

- The ARE’s Role: The runtime enables Case-Based Reasoning. Before an agent attempts a complex task (e.g., "Deploy to Production"), the ARE queries Episodic Memory: "Have we done this before? What happened?" If a previous attempt failed due to a timeout, the agent "remembers" that failure and proactively adjusts its timeout settings this time.

- Why it matters: This transforms an agent from a static script runner into a system that learns. A financial advisor agent, for instance, remembers not just that you invested, but why you made that decision last year, allowing it to offer advice that respects your historical rationale.
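The case-based lookup described above can be sketched in a few lines. This is a toy, in-memory version under stated assumptions: the `Episode` fields and the `EpisodicMemory` class are hypothetical, and plain word overlap (Jaccard similarity) stands in for the embedding search a vector database would provide.

```python
import time
from dataclasses import dataclass


@dataclass
class Episode:
    task: str
    action: str
    outcome: str      # e.g. "success" or "failure"
    lesson: str
    timestamp: float


class EpisodicMemory:
    def __init__(self) -> None:
        self._episodes: list[Episode] = []

    def record(self, task: str, action: str, outcome: str, lesson: str) -> None:
        """Store one time-stamped episode."""
        self._episodes.append(Episode(task, action, outcome, lesson, time.time()))

    def recall(self, task: str, limit: int = 3) -> list[Episode]:
        """Return past episodes ranked by word overlap (Jaccard) with the task."""
        query = set(task.lower().split())
        scored = []
        for episode in self._episodes:
            words = set(episode.task.lower().split())
            union = query | words
            overlap = len(query & words) / len(union) if union else 0.0
            if overlap > 0:
                scored.append((overlap, episode))
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [episode for _, episode in scored[:limit]]
```

Before a "Deploy to Production" run, the runtime could call `recall("deploy to production")` and feed any returned `lesson` strings into the agent's plan.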

Semantic Memory

The "Library" of Facts

While Episodic memory is about events ("I tried to compile this code yesterday"), Semantic memory is about facts ("Python 3.12 introduced this specific syntax"). This is the generalized knowledge base (domain rules, enterprise documentation, and grounded truths) that the agent references to make informed decisions.

- Where it lives: In Knowledge Graphs (like Neo4j) or structured SQL/NoSQL databases, often augmented by RAG (Retrieval-Augmented Generation) pipelines.

- The ARE’s Role: The runtime manages Grounding. When an agent makes a claim it cannot support or signals low confidence, the ARE forces a lookup in Semantic Memory to verify facts. It anchors the agent's creativity in the reality of your enterprise data.

- Why it matters: It separates "intelligence" from "knowledge." You can swap out the LLM (the brain), but the Semantic Memory (the education) remains intact, ensuring the agent remains an expert in your specific domain regardless of the underlying model.

Long-Term Memory (Persistent State)

The "Identity" and Strategic Context

Distinguished from the event-logs of episodic memory, this layer manages the enduring state of the world and the user. It is the repository for User Preferences ("The user prefers concise reports"), Project Histories ("This migration project is 60% complete"), and Procedural Knowledge (Standard Operating Procedures).

- Where it lives: Across persistent storage systems: User Profile Databases, CRM integrations, and specialized "Memory Stores" (like Zep or MemGPT’s archival storage).

- The ARE’s Role: The runtime handles Personalization and Continuity. It ensures that if an agent is paused today and resumed next week, it doesn't just remember the facts (Semantic) or the past events (Episodic); it remembers the strategy and the user's specific constraints.

- Why it matters: This builds trust. An agent that remembers you prefer Python over Java, or that your risk tolerance is "low," feels like a partner. One that needs to be reminded of your preferences every Monday feels like a generic tool.

Memory Management Within the ARE: From Storage to Orchestration

Within an Agent Runtime Environment, memory management is not a passive background task; it is a high-stakes state orchestration problem. While an LLM provides the "thought," the ARE provides the "metabolism" — deciding what to keep, what to digest, and what to discard to keep the system running efficiently and accurately.

Storage and Retrieval: The Multimodal Data Layer

Memory must be encoded and indexed so that retrieval is both fast (ideally sub-second) and highly relevant. Modern AREs leverage a hybrid approach:

- Vector Databases (Semantic Lookup): Tools like FAISS or Chroma allow the agent to "feel" its way toward relevant memories based on meaning rather than exact keywords.

- Graph Stores (Relational Context): Knowledge graphs (e.g., Neo4j) allow agents to understand complex relationships, such as "User A is the manager of Project B, which depends on Server C." This prevents the agent from making decisions that violate organizational structures.

- Hybrid Querying: The most advanced AREs combine structured SQL (for precise facts like "What was the stock price?") with unstructured vector search (for "What was the general sentiment of the meeting?").
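To make the hybrid idea concrete, here is a minimal sketch that answers a precise question from SQL and a fuzzy one from similarity search. The table, the sample rows, and the helper names are all invented for illustration, and the bag-of-words `embed` function is only a stand-in for a real embedding model.

```python
import math
import sqlite3
from collections import Counter


def embed(text: str) -> Counter:
    """Toy bag-of-words 'embedding'; a real system would call an embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[token] for token, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


# Structured side: exact facts live in a relational table (sample data).
db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE prices (ticker TEXT, day TEXT, close REAL)")
db.execute("INSERT INTO prices VALUES ('ACME', '2024-01-02', 41.5)")

# Unstructured side: free-text notes searched by similarity (sample data).
notes = [
    "meeting sentiment was cautious about the ACME launch",
    "team lunch was moved to Friday",
]


def exact_price(ticker: str, day: str):
    """Precise lookup: returns the closing price or None."""
    row = db.execute(
        "SELECT close FROM prices WHERE ticker = ? AND day = ?", (ticker, day)
    ).fetchone()
    return row[0] if row else None


def fuzzy_note(question: str) -> str:
    """Fuzzy lookup: returns the note most similar to the question."""
    query = embed(question)
    return max(notes, key=lambda note: cosine(query, embed(note)))
```

A router in the runtime would decide which path a given question takes; that routing logic is out of scope for this sketch.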

Lifecycle and Prioritization

Effective memory management isn't just about remembering; it's about curation. Every token stored in an agent's memory adds "noise" and cost. The ARE must manage three specific phases:

- Tiered Retention: Inspired by systems like MemoryOS, agents use hierarchical tiers.

  - Short-term: High-resolution logs of the last 5 minutes.

  - Mid-term: Summarized "chapters" of the last hour.

  - Long-term: Highly distilled "insights" or "rules" that last forever.

- Memory Decay & Heat Mechanisms: Not all memories are equal. The ARE tracks "access frequency" (how often a memory is recalled). Memories that aren't "hit" frequently are eventually archived or deleted (decayed) to prevent Memory Bloat, which slows down retrieval.

- Summarization & Consolidation: When a conversation gets too long, the ARE doesn't just cut the top off; it asks a secondary "Compressor Agent" to summarize the previous 50 turns into a few core bullet points, preserving the essence while freeing up the context window.
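One simple way to picture the heat and decay mechanism is a counter that grows on every recall and shrinks on every maintenance pass. The sketch below is an assumption-laden toy: `HeatTracker`, the decay factor, and the archive threshold are hypothetical values picked for the example, not taken from MemoryOS or any specific runtime.

```python
from dataclasses import dataclass


@dataclass
class MemoryItem:
    text: str
    heat: float = 1.0


class HeatTracker:
    """Hypothetical heat bookkeeping: hits add heat, every tick decays it."""

    def __init__(self, decay: float = 0.5, archive_below: float = 0.2) -> None:
        self.decay = decay
        self.archive_below = archive_below
        self.active: dict[str, MemoryItem] = {}
        self.archived: dict[str, MemoryItem] = {}

    def add(self, key: str, text: str) -> None:
        self.active[key] = MemoryItem(text)

    def hit(self, key: str) -> str:
        """Recall a memory and warm it up."""
        item = self.active[key]
        item.heat += 1.0
        return item.text

    def tick(self) -> None:
        """Apply one decay step and move cold items out of the active set."""
        for key in list(self.active):
            item = self.active[key]
            item.heat *= self.decay
            if item.heat < self.archive_below:
                self.archived[key] = self.active.pop(key)
```

Archiving rather than deleting keeps cold memories recoverable, at the cost of storage.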

Integration with Execution and Context

Memory is the bridge between the agent's Planning and its Actions. In an ARE, memory is tightly coupled with the execution loop:

- Prompt Construction: The ARE acts as a "Context Engineer." Before sending a prompt to the LLM, it dynamically injects the most relevant slices of memory (user preferences + past failures + domain facts) into the system message.

- Stateful Recovery: If an agent fails mid-execution (e.g., a network timeout), the ARE uses its Checkpointing memory to restart the agent at the exact sub-step where it left off, rather than beginning the entire task from scratch.

- Supervisor Coordination: In multi-agent patterns, the ARE manages "Shared Memory." A Supervisor Agent can write to a shared memory block that its Sub-agents can all read from, ensuring that the "worker" agents don't waste time re-discovering information the leader already knows.
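The prompt construction step in the list above can be sketched as a plain string-assembly function. The function name, section titles, and per-section cap are assumptions made for this example; how each slice is retrieved is left out.

```python
def build_system_message(
    base_instructions: str,
    preferences: list[str],
    past_failures: list[str],
    domain_facts: list[str],
    max_items_per_section: int = 3,
) -> str:
    """Assemble a system message from memory slices, skipping empty sections."""
    sections = [
        ("User preferences", preferences),
        ("Known failures to avoid", past_failures),
        ("Domain facts", domain_facts),
    ]
    parts = [base_instructions.strip()]
    for title, items in sections:
        if not items:
            continue
        # Cap each section so one noisy memory type cannot crowd out the others.
        bullets = "\n".join(f"- {item}" for item in items[:max_items_per_section])
        parts.append(f"{title}:\n{bullets}")
    return "\n\n".join(parts)
```

Capping each section is a crude budget; a fuller implementation would more likely count tokens than items.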

Without the ARE's orchestration, memory updates can become "corrupted." If an agent updates a database but then crashes, the memory layer must be able to reconcile that incomplete update—much like a traditional database transaction—to ensure the agent's "worldview" remains consistent.

Practical Memory Patterns for Agentic AREs

Transitioning from theory to production requires specific, repeatable patterns. In a high-performance Agent Runtime Environment (ARE), these patterns ensure the agent remains responsive, cost-effective, and contextually accurate over hundreds of interactions.

Buffer + Summary Memory

The "Sliding Window" Approach

To stay within token limits and manage costs, the ARE maintains a hybrid window of the conversation.

- The Buffer: The most recent N messages are kept in their raw, high-fidelity form. This preserves immediate nuances, code snippets, or specific formatting instructions.

- The Summary: As messages exit the buffer, they are passed to a background Summarizer Agent. This agent distills the "essence" of the dialogue into a concise executive summary.

- Usage: The LLM receives [Current Summary] + [Recent Buffer]. This lets a conversation run far past the context limit without hitting a "token wall," at the cost of losing some detail from older turns.
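The buffer-plus-summary pattern above can be sketched as follows. `BufferSummaryMemory` is a hypothetical class, and `naive_summarizer` is a deliberately crude placeholder for the call to a summarizer model.

```python
from collections import deque
from typing import Callable


class BufferSummaryMemory:
    def __init__(self, buffer_size: int, summarize: Callable[[str, str], str]) -> None:
        self.buffer: deque[str] = deque()
        self.buffer_size = buffer_size
        self.summary = ""
        self._summarize = summarize  # stand-in for a call to a summarizer model

    def add(self, message: str) -> None:
        """Append a message; fold anything that falls out of the buffer into the summary."""
        self.buffer.append(message)
        while len(self.buffer) > self.buffer_size:
            evicted = self.buffer.popleft()
            self.summary = self._summarize(self.summary, evicted)

    def context(self) -> str:
        """Return [Current Summary] + [Recent Buffer] as one prompt fragment."""
        recent = "\n".join(self.buffer)
        return f"[Summary]\n{self.summary}\n\n[Recent]\n{recent}"


def naive_summarizer(summary: str, evicted: str) -> str:
    """Placeholder: keeps the first five words of each evicted message."""
    gist = " ".join(evicted.split()[:5])
    return f"{summary}; {gist}" if summary else gist
```

Because the summarizer is injected, the same class works with a real model call or with a cheap heuristic during tests.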

Retrieval-Augmented Generation (RAG)

The "On-Demand" Fact-Finder

Rather than stuffing every piece of information into the prompt, the ARE uses a "just-in-time" delivery system.

- Mechanism: On every step of reasoning, the ARE takes the agent's current thought and queries a Vector Database (like Pinecone or Chroma) for semantically related memories.

- Benefit: This significantly reduces hallucinations. If an agent is asked about a user’s previous project, it retrieves the specific facts from RAG rather than trying to "guess" based on the patterns in its training data.
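A stripped-down version of this retrieve-then-prompt step might look like the sketch below. `ToyVectorIndex` and `augment` are hypothetical names, and word-count vectors with cosine similarity stand in for a real embedding model and a vector database such as Pinecone or Chroma.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: word counts."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class ToyVectorIndex:
    def __init__(self) -> None:
        self._rows: list[tuple[str, Counter]] = []

    def add(self, text: str) -> None:
        self._rows.append((text, embed(text)))

    def search(self, query: str, k: int = 2, min_score: float = 0.1) -> list[str]:
        """Return up to k stored texts whose similarity clears min_score."""
        q = embed(query)
        scored = [(cosine(q, vec), text) for text, vec in self._rows]
        scored = [pair for pair in scored if pair[0] >= min_score]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [text for _, text in scored[:k]]


def augment(thought: str, index: ToyVectorIndex) -> str:
    """Prepend retrieved memories to the agent's current thought."""
    memories = index.search(thought)
    context = "\n".join(f"- {m}" for m in memories) or "- (nothing relevant found)"
    return f"Relevant memories:\n{context}\n\nCurrent thought: {thought}"
```

The `min_score` cutoff matters as much as `k`: returning nothing is usually better than injecting an unrelated memory.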

Hierarchical Memory Stores (H-MEM)

The "OS-Style" Paging

Inspired by computer architecture, this pattern organizes memory by access speed and abstraction:

- L1 (Short-term): Immediate task state and conversation window (Lives in RAM/Context).

- L2 (Mid-term): Detailed logs of recent sessions or sub-tasks (Lives in a fast cache like Redis).

- L3 (Long-term): Permanent user preferences and deep domain knowledge (Lives in a durable Vector or Graph DB).

- The ARE's Role: It acts as the Memory Controller, "paging" information between tiers as it becomes relevant to the current objective.
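The paging behaviour of the tiers above can be illustrated with three in-process containers. This is only a sketch: plain dictionaries stand in for Redis and for the durable vector or graph store, and `TieredMemory` with its least-recently-used eviction rule is an assumption made for the example.

```python
from collections import OrderedDict


class TieredMemory:
    """L1 is a small LRU; evictions are demoted to L2; L3 is the durable store."""

    def __init__(self, l1_capacity: int = 2) -> None:
        self.l1: OrderedDict[str, str] = OrderedDict()
        self.l2: dict[str, str] = {}   # stand-in for a fast cache
        self.l3: dict[str, str] = {}   # stand-in for durable storage
        self.l1_capacity = l1_capacity

    def put(self, key: str, value: str) -> None:
        """Place an item in L1, demoting the least recently used item if full."""
        self.l1[key] = value
        self.l1.move_to_end(key)
        while len(self.l1) > self.l1_capacity:
            old_key, old_value = self.l1.popitem(last=False)
            self.l2[old_key] = old_value

    def get(self, key: str):
        """Look through L1, L2, L3 in order; page hits from lower tiers up into L1."""
        if key in self.l1:
            self.l1.move_to_end(key)
            return self.l1[key]
        if key in self.l2:
            value = self.l2.pop(key)
            self.put(key, value)
            return value
        if key in self.l3:
            value = self.l3[key]  # the durable copy stays in L3
            self.put(key, value)
            return value
        return None
```

In this sketch an L3 hit is copied upward rather than moved, so the durable record survives later evictions.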

Memory Factoring with Scores / Relevance

The "Intelligent Pruning" Mechanism

Not all memories are equally valuable. Production AREs score memories based on a multi-factor formula to decide what to retrieve:

- Recency: Newer memories are often more relevant to current tasks.

- Importance: Decisions with high impact (e.g., "User changed their password") are weighted higher than trivial small talk.

- Frequency: If an agent recalls a fact often, its "heat" increases, keeping it in faster storage tiers.

- Semantic Relevance: How closely the memory matches the current query's intent.

Intelligent pruning via relevance scoring prevents "Context Pollution," where an agent gets distracted by old, irrelevant information that happens to share a few keywords with the current task.
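The four factors can be combined in many ways; a weighted sum is one of the simplest. The function below is a sketch under assumptions: the weights, the one-hour half-life, and the saturating frequency term are illustrative choices, not values from any particular system.

```python
def memory_score(
    relevance: float,
    importance: float,
    access_count: int,
    last_access_ts: float,
    now: float,
    half_life_s: float = 3600.0,
    weights: tuple[float, float, float, float] = (0.4, 0.2, 0.1, 0.3),
) -> float:
    """Weighted sum of recency, importance, frequency, and relevance.

    relevance and importance are expected in the range 0..1; the weights
    are (recency, importance, frequency, relevance).
    """
    w_rec, w_imp, w_freq, w_rel = weights
    age = max(0.0, now - last_access_ts)
    recency = 0.5 ** (age / half_life_s)          # halves every half_life_s seconds
    frequency = 1.0 - 1.0 / (1.0 + access_count)  # saturates toward 1 with more hits
    return w_rec * recency + w_imp * importance + w_freq * frequency + w_rel * relevance
```

At retrieval time the runtime would compute this score for each candidate and keep only the top few; the right weights depend heavily on the workload and need tuning.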

Key Challenges in Memory Management

While the theoretical layers of memory are elegant, implementing them in a production-grade Agent Runtime Environment (ARE) introduces significant engineering friction. Moving beyond simple storage requires solving the trade-off between "perfect recall" and "system performance."

Token and Latency Constraints

The "Context Tax"

Every memory retrieved and injected into a prompt has a cost, both in dollars (tokens) and in seconds (latency).

- The Bottleneck: LLM context windows, while expanding, still penalize long inputs with slower "Time to First Token" (TTFT) and higher likelihood of "lost in the middle" reasoning errors.

- The Strategy: High-performance AREs use KV (Key-Value) Cache Management. This allows the runtime to "freeze" certain parts of the context (like the agent's core instructions and long-term user preferences) so they don't have to be re-processed on every turn, drastically reducing latency. This works only when the cached prefix is identical from turn to turn, so stable content should come first in the prompt.

Relevance vs. Redundancy

The "Noise" Problem

Retrieving too much is just as dangerous as retrieving nothing. If an agent is asked "What is the status of my order?", a naive RAG system might pull every conversation that mentions "order," overwhelming the agent with irrelevant history.

- The Solution: Semantic Re-ranking. The ARE performs an initial broad search (Vector) and then uses a smaller, faster model to "re-rank" the top results for specific relevance to the current sub-task. This ensures the agent's "working memory" contains only high-signal information.
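The two-stage shape of re-ranking can be shown with a toy example. Everything here is a simplification: keyword overlap replaces the vector search in stage one, a query-coverage score replaces the smaller re-ranking model in stage two, and the stopword list and function names are invented for the sketch.

```python
STOPWORDS = {"the", "of", "my", "is", "a", "to", "what", "on"}


def tokens(text: str) -> set[str]:
    """Lowercase content words, with question marks and stopwords removed."""
    return {t for t in text.lower().replace("?", "").split() if t not in STOPWORDS}


def broad_search(query: str, docs: list[str]) -> list[str]:
    """Stage 1: high recall. Keep anything sharing at least one content word."""
    q = tokens(query)
    return [d for d in docs if q & tokens(d)]


def rerank(query: str, candidates: list[str], top_n: int = 1) -> list[str]:
    """Stage 2: stand-in for a re-ranking model; scores how much of the query is covered."""
    q = tokens(query)
    ranked = sorted(
        candidates,
        key=lambda d: len(q & tokens(d)) / max(len(q), 1),
        reverse=True,
    )
    return ranked[:top_n]
```

The first stage is cheap and permissive; the second is stricter and runs only on the short candidate list, which is what keeps the overall cost manageable.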

Security, Privacy, and "The Right to be Forgotten"

The Governance Challenge

Agent memory often contains sensitive enterprise data or Personally Identifiable Information (PII).

- Memory Poisoning: If an agent stores a malicious "memory" (e.g., from a prompt injection in a document it read), that "poison" can persist across sessions, permanently corrupting the agent's behavior.

- Isolation: AREs must enforce Tenant-Level Isolation, ensuring that Memory A (User 1) never leaks into Memory B (User 2), even if they use the same underlying model.

- Compliance: Regulations like GDPR require the ability to delete specific data. Because agent memory is often embedded as high-dimensional vectors, "forgetting" a specific fact without rebuilding the entire index is a complex technical hurdle.
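Isolation and deletion are easier to reason about when every record is keyed by tenant from the start. The sketch below assumes a hypothetical `TenantMemoryStore` backed by nested dictionaries; it does not address the harder problem of removing a fact from an existing vector index.

```python
class TenantMemoryStore:
    """Hypothetical store that namespaces every record by tenant id."""

    def __init__(self) -> None:
        self._data: dict[str, dict[str, str]] = {}

    def write(self, tenant_id: str, key: str, value: str) -> None:
        self._data.setdefault(tenant_id, {})[key] = value

    def read(self, tenant_id: str, key: str):
        # Lookups never cross the tenant boundary: there is no global key space.
        return self._data.get(tenant_id, {}).get(key)

    def forget(self, tenant_id: str, key: str) -> bool:
        """Delete one record; returns True if something was removed."""
        return self._data.get(tenant_id, {}).pop(key, None) is not None

    def forget_tenant(self, tenant_id: str) -> int:
        """Erase everything for a tenant; returns the number of records removed."""
        return len(self._data.pop(tenant_id, {}))
```

Requiring a `tenant_id` on every call makes cross-tenant reads impossible by construction rather than by convention.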

Scalability: From Pipeline to Policy

The Evolution of Memory

As an agent performs thousands of tasks, its memory grows unchecked. Traditionally, this was managed by static pipelines (e.g., "Delete anything older than 30 days").

- AtomMem (Learnable Policy): Emerging research like AtomMem (2026) suggests that memory management should be a learnable skill. Instead of a developer writing rules for what to save, the agent uses Reinforcement Learning (RL) to develop a "policy" for its own CRUD (Create, Read, Update, Delete) operations.

- The Result: The agent autonomously decides: "I will delete this transient log to save space, but I will consolidate this specific user preference into my long-term core."

Why Memory Will Define the Next Era of Agentic AI

As we move toward the next era of Agentic AI, a fundamental truth is emerging: Intelligence is not just about reasoning; it is about continuity. We have spent the last few years perfecting the "reasoner"—making LLMs more logical, faster, and more capable of complex thought. But a brilliant mind without a memory is like a supercomputer that reboots every five minutes. It can solve an equation, but it cannot build a career.

This is why Memory Management is shifting from a "nice-to-have" feature to the core infrastructure of the Agent Runtime Environment (ARE).

Memory as the Anchor of Trust and Autonomy

In the enterprise, the transition from "tools" to "digital workers" hinges entirely on the sophistication of the memory layer. Here is why memory will define the next phase of our journey:

State Governs the Narrative

In any long-running project, the "state" is the current version of the truth. Without memory, an agent loses the narrative. It might successfully complete a sub-task but fail the overall mission because it forgot the constraints established three days ago. By maintaining a robust state, the ARE ensures that the agent’s actions remain aligned with the overarching "story" of the project.

From Reactive to Proactive Evolution

True autonomy requires an agent to adapt its behavior based on past outcomes. Memory allows an agent to move beyond a static "system prompt" and into an evolutionary lifecycle. When an agent remembers that a certain approach failed in a specific environment, it doesn't just "retry"—it pivots. This ability to learn from experience is what will eventually allow agents to handle the "edge cases" that currently require human intervention.

Real Personalization is Hard-Coded in Memory

Personalization is often dismissed as a UX "fluff" feature, but in Agentic AI, it is a functional requirement. If a DevSecOps agent doesn't remember your specific security protocols or your team's unique naming conventions, it becomes a liability. Memory allows the ARE to "hard-code" these nuances into the agent's worldview, making it a seamless extension of the existing human team.

As we continue to build out the Agent Runtime Environment (ARE), we must treat memory not just as a storage problem to be solved with a database, but as a cognitive orchestration problem. The maturity of your memory management will directly determine whether your agents are temporary consultants or permanent, evolving digital assets.

Conclusion: Memory as the Lifeblood of Agent Runtime Environments

As we have explored in this third installment, memory in an Agent Runtime Environment (ARE) is far more than a technical storage requirement. It is the cognitive lifeblood that allows an agent to transcend the limitations of a stateless model.

In a robust ARE, memory acts as:

- The Engine of Experience: Carrying the lessons of past successes and failures forward to optimize future actions.

- The Archive of Identity: Building a consistent "worldview" and situational context that remains stable across long-running projects.

- The Foundation of Continuity: Enabling a brand of intelligence that doesn't just react to prompts, but evolves alongside the user and the organization.

Designing memory architectures — with the right types, lifecycle policies, and retrieval strategies — turns static agents into adaptive, intelligent systems capable of real-world reliability.

In Part 4 of this series, we’ll explore Memory Operationalization — including tooling, indexing frameworks, and cost-performance tradeoffs in production ARE deployments.

References & Further Reading

- https://agenticaimasters.in/agentic-ai-architecture/

- https://agentic-design.ai/patterns/memory-management

- https://www.ibm.com/think/topics/ai-agent-memory

- https://mljourney.com/memory-management-in-agentic-ai-agents/

- https://arxiv.org/abs/2506.06326

- https://www.jit.io/resources/devsecops/its-not-magic-its-memory-how-to-architect-short-term-memory-for-agentic-ai

- https://randeepbhatia.com/reference/agent-memory-architectures

- https://arxiv.org/abs/2601.08323

- https://memgpt.ai/

- https://github.com/getzep/zep

- https://python.langchain.com/docs/modules/memory/

- https://www.youtube.com/watch?v=W2HVdB4Jbjs

- https://www.youtube.com/watch?v=52xTxeqT4ws

- https://arxiv.org/abs/2309.02427

- https://www.pinecone.io/learn/llm-long-term-memory/

- https://arxiv.org/abs/2310.08560

- https://www.griddynamics.com/blog/agentic-ai-deployment

- https://www.youtube.com/watch?v=WsGVXiWzTpI

- https://www.theregister.com/2026/01/28/how_agentic_ai_strains_modern_memory_heirarchies/

- https://pub.towardsai.net/how-to-design-efficient-memory-architectures-for-agentic-ai-systems-81ed456bb74f

- https://www.falkordb.com/blog/vectorrag-vs-graphrag-technical-challenges-enterprise-ai-march25/

- https://github.com/Sean-V-Dev/HMLR-Agentic-AI-Memory-System

- https://redis.io/blog/build-smarter-ai-agents-manage-short-term-and-long-term-memory-with-redis/

- https://vardhmanandroid2015.medium.com/beyond-vector-databases-architectures-for-true-long-term-ai-memory-0d4629d1a006

- https://www.mdpi.com/1999-5903/17/9/404

- https://arxiv.org/pdf/2502.12110

- https://arxiv.org/abs/2512.13564

Disclaimer: This post provides general information and is not tailored to any specific individual or entity. It includes only publicly available information for general awareness purposes. No warranty is made that this post is free from errors or omissions. Views are personal.